Overview

- The “Upper Paleolithic revolution” once appeared to mark an abrupt explosion of modern behavior in Europe around 40–45 thousand years ago. African evidence now pushes the origins of symbolic thought back to at least 100,000 years ago, and possibly to 164,000 years ago at Pinnacle Point, indicating that modern cognition emerged gradually in Africa long before it appeared in Europe.

- Key evidence, most of it African, includes ochre-processing kits and engraved geometric designs from Blombos Cave (~75–100 kya), perforated shell beads from Morocco (~82 kya) and, just outside Africa, from Israel (~100–135 kya), bone harpoons from Katanda (~90 kya), and systematic pigment use at Pinnacle Point (~164 kya). Together these finds indicate that behavioral modernity is rooted in the African Middle Stone Age, not the European Upper Paleolithic.

- Joseph Henrich’s cultural brain hypothesis offers an alternative to biological explanations for the apparent late flowering of cultural complexity: population size and social network density, not neurological change, determine the rate of cultural innovation, meaning that the same cognitive hardware can produce vastly different cultural outputs depending on demographic conditions.

Few questions in paleoanthropology have generated more debate than the nature and timing of the cognitive transition that gave rise to fully modern human thought. The capacity for symbolic reasoning—the ability to represent absent or abstract things through arbitrary signs shared by a community of minds—underlies language, art, religion, and the cumulative cultural inheritance that distinguishes Homo sapiens from every other species. Yet the archaeological signature of this capacity in the prehistoric record is neither uniform across time nor evenly distributed across geography, and interpreting the pattern has required researchers to disentangle biological evolution, demographic change, and the self-amplifying dynamics of cultural transmission.5

For much of the twentieth century, the emergence of modern human cognition was associated with a dramatic and apparently rapid cultural transformation in Europe between roughly 40,000 and 45,000 years ago—a period in which the continent’s archaeological record shifts from the Mousterian technology of the Neanderthals to the blade-based, ornament-rich, artistically prolific cultures of the Upper Paleolithic. This transition, sometimes called the “human revolution” or the “great leap forward,” appeared to represent a cognitive watershed without clear precursor. Since the 1990s, however, the accumulation of African Middle Stone Age evidence has fundamentally reframed the question: the European transformation now looks less like the origin of modern cognition and more like its arrival in a new geographic theater, following a long incubation on the African continent.5, 9

The African Middle Stone Age and the real origin of modern cognition

The Middle Stone Age (MSA) of Africa, spanning roughly 280,000 to 30,000 years ago, is now recognized as the crucible of modern behavioral complexity. The landmark synthesis by Sally McBrearty and Alison Brooks, published in 2000, catalogued the African MSA evidence systematically and demonstrated that virtually every category of behavior once considered diagnostic of the European Upper Paleolithic had African antecedents stretching back two to three times further in time. These included blade and microlithic technology, long-distance raw material procurement, projectile technology, fishing, mining, and personal ornaments—all appearing sporadically in Africa before 100,000 years ago and accumulating gradually rather than emerging in a single creative burst.5 The implication was stark: the “revolution” was a European illusion produced by a combination of research bias, taphonomic filtering of the older African record, and the demographic conditions under which cultural complexity either accumulates or disperses.

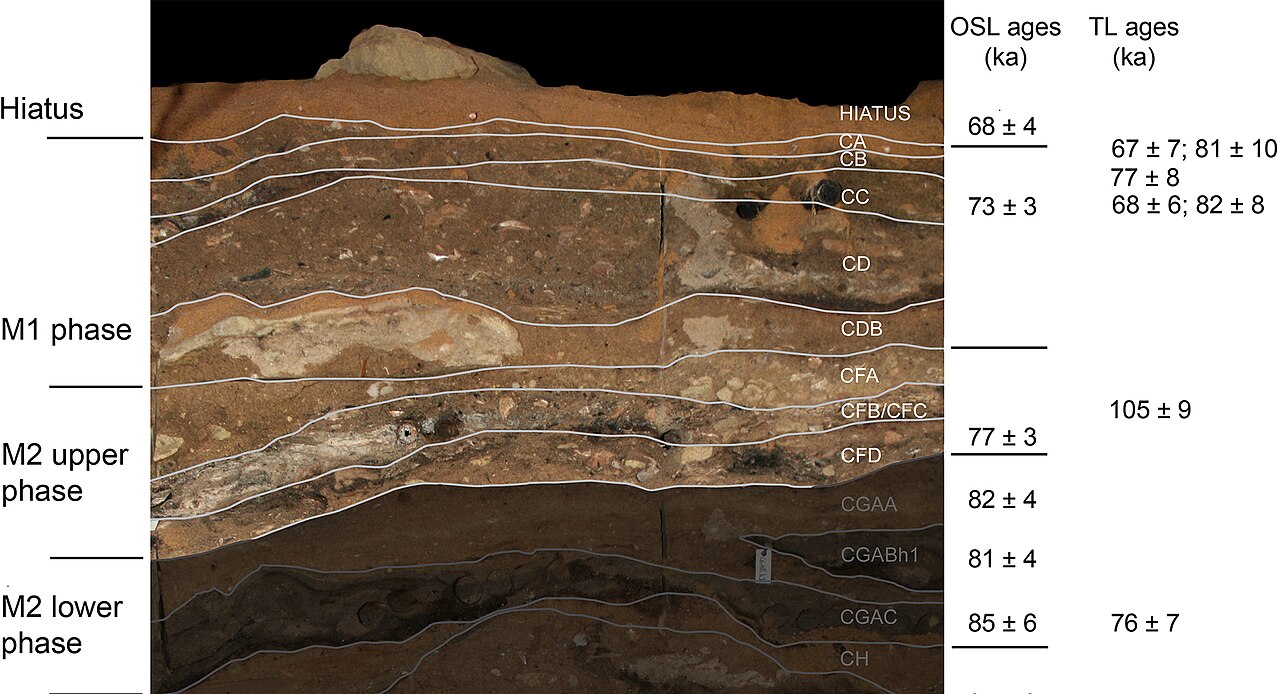

Some of the most compelling African MSA evidence comes from the southern Cape coast of South Africa. At Pinnacle Point Site 13B, excavations led by Curtis Marean recovered large quantities of deliberately processed red ochre in assemblages dated by optically stimulated luminescence to approximately 164,000 years ago. This is among the earliest evidence of systematic pigment use by anatomically modern humans, and it precedes the supposed European revolution of 40,000–45,000 years ago by roughly 120,000 years.6, 7 The site also contains evidence of shellfish exploitation during periods of cold, productive upwelling, suggesting a population of coastal foragers with the dietary flexibility and ecological intelligence associated with cognitively sophisticated planning.7

At Katanda on the Semliki River in what is now the Democratic Republic of Congo, John Yellen and colleagues excavated a series of sites yielding elaborately worked bone harpoon points in stratigraphic contexts dated to approximately 90,000 years ago. The harpoons are complex, standardized tools requiring sustained planning, fine motor control, and knowledge transmission across generations—properties that imply the existence of a cultural system capable of maintaining and transmitting complex technical knowledge through time.8 Their age substantially predates any comparable bone tool technology from Europe or the Levant and constitutes one of the clearest early signatures of modern behavioral complexity in the African record.

Blombos Cave and the material record of symbolic thought

No single site has done more to transform understanding of the deep history of human cognition than Blombos Cave, situated on a sea-facing cliff approximately 300 kilometers east of Cape Town on the southern Cape coast of South Africa. Excavations led by Christopher Henshilwood have produced a remarkable sequence of MSA deposits bearing two categories of symbolic material that have become central to debates about behavioral modernity.

The first is engraved ochre. Pieces of red ochre bearing deliberately incised geometric patterns have been recovered from Blombos Cave levels dated to approximately 75,000–80,000 years ago. The most celebrated specimen bears a design of parallel diagonal lines intersected by a longer line, creating a regular cross-hatched pattern whose execution required prior surface preparation and sustained attentional control. A second, more complex engraved piece described by Henshilwood and colleagues in 2009 displays a pattern of such precision and internal symmetry that it invites comparison with formal graphic conventions.2, 19 These engravings are significant not merely as early art but as evidence of a capacity to conceive of a surface as a space for deliberate geometric inscription—a conceptual operation that underlies map-making, notation, and eventually writing.

The second category is personal ornaments. Perforated marine gastropod shells of the species Nassarius kraussianus, recovered from MSA levels at Blombos Cave dated to approximately 75,000 years ago, show consistent perforation patterns, wear traces consistent with suspension on a cord, and ochre residues suggesting contact with pigment-bearing body parts or materials.3 The shells were transported to the cave from coastal sources at least 20 kilometers distant, confirming deliberate collection. Personal ornaments are particularly valuable symbolic indicators because their communicative function—signaling identity, group affiliation, or status to observers—presupposes shared symbolic conventions within a social community.

A third discovery at Blombos Cave deepens the picture further. In 2011, Henshilwood and colleagues reported two ochre-processing kits from levels dated to approximately 100,000 years ago. Each kit comprised an abalone shell used as a mixing bowl, ochre, charcoal, crushed bone, and stone grinders. The kits preserve evidence of a multi-step manufacturing process in which ingredients were combined in a specific sequence to produce a compound likely used for body or surface painting.1 The deliberate recipe, the use of containers, and the structured sequence of operations imply planning depth, knowledge transmission, and the preservation of learned procedures across time—all hallmarks of cognitively modern behavior. Taken together, the Blombos Cave sequence documents a repertoire of symbolic and technically complex behaviors at a date that comfortably predates the European Upper Paleolithic by tens of thousands of years.

Revolution or gradual emergence?

The framing of cognitive evolution as a “revolution” carries theoretical as well as empirical stakes. The original human revolution hypothesis, developed most influentially by Paul Mellars and Chris Stringer in the late 1980s, proposed that the Upper Paleolithic transition in Europe represented a genuine behavioral watershed driven by a biological change—possibly a neurological reorganization or a genetic mutation affecting language capacity—that occurred in the ancestors of modern Europeans or in the African population that gave rise to them shortly before 50,000 years ago.9 On this model, the cognitive difference between a Neanderthal and a Cro-Magnon was not merely quantitative but qualitative: a difference in kind rather than degree.

The gradualist counterargument, advanced by McBrearty and Brooks and subsequently supported by the ongoing expansion of the African MSA record, holds that there is no identifiable moment of cognitive revolution. Instead there was an uneven, geographically dispersed, and temporally extended accumulation of behavioral innovations across the African Middle Stone Age, followed by their more consistent expression as populations grew and social networks expanded in the Later Stone Age and Upper Paleolithic.5 On this reading, the European record looks abrupt because European populations derived from a subset of African populations that already carried the full behavioral repertoire; the transition to the Upper Paleolithic in Europe reflects replacement and cultural diffusion, not cognitive evolution in situ.

A third position, perhaps closest to the current consensus, holds that the cognitive hardware for symbolic thought was fully assembled in Homo sapiens by at least 100,000 years ago—and possibly much earlier—but that its expression in the archaeological record was modulated by demographic and ecological variables. Small, dispersed populations lose knowledge faster than they gain it; connected, larger populations can sustain complex technologies and build cumulatively on inherited cultural capital. On this view, the African MSA and the European Upper Paleolithic do not represent different cognitive stages but different demographic regimes operating on the same underlying cognitive architecture.9, 10

The question of language

Language sits at the heart of debates about the cognitive revolution because fully syntactic, compositional language is both the most powerful symbolic system humans possess and among the most difficult to detect archaeologically. Unlike ochre engravings or shell beads, language leaves no direct physical trace: its existence must be inferred from the complexity of cultural products whose production and transmission would have required it, from anatomical structures associated with speech production, and from genetic evidence bearing on the evolution of language-related neural circuits.14

The question of when fully modern language emerged divides into at least two separable issues: the evolution of the anatomical prerequisites for articulate speech and the evolution of the cognitive capacity for syntax. The fossil record shows that the hyoid bone—which supports the muscles involved in speech production—had achieved a modern configuration in Neanderthals by at least 60,000 years ago, suggesting that the vocal tract morphology necessary for a broad range of speech sounds was in place well before the Upper Paleolithic. The FOXP2 gene, associated with the fine motor control required for articulate speech, had reached its human-specific form in both Homo sapiens and Neanderthals, though the functional significance of this shared derived state remains debated.16

Marc Hauser, Noam Chomsky, and Tecumseh Fitch argued in an influential 2002 paper that the distinguishing feature of human language is a capacity for recursive syntax—the ability to embed phrases within phrases to generate an unlimited range of meanings from a finite set of elements—and that this capacity may have evolved in the human lineage uniquely and relatively recently, possibly as a by-product of capacities for other forms of hierarchical thinking.14 If this is correct, the evolution of syntax may have been the specific cognitive novelty that enabled the cultural accumulation visible in the archaeological record after roughly 100,000 years ago. However, the recursive hypothesis remains contested: other researchers argue that protolanguage with limited syntax would have been sufficient to initiate cultural accumulation, and that the transition to full syntax may have been gradual rather than saltational.15 The honest position, reflected in the review by Francesco d’Errico and Chris Stringer, is that language evolution cannot be directly dated from the archaeological or fossil record; the symbolic artifacts of the African MSA are consistent with fully modern language but do not require it, and the question of precisely when syntactic language emerged remains genuinely open.16

The cultural brain hypothesis and demographic explanations

One of the most influential frameworks for understanding the uneven trajectory of cultural complexity in human prehistory is the cultural brain hypothesis developed by Joseph Henrich and colleagues. The core insight is that human beings are distinguished from other animals not primarily by individual intelligence but by their dependence on cumulative cultural learning—the capacity to acquire, store, and build upon the skills, knowledge, and tools developed by previous generations. This dependence creates a dynamic in which the size and connectivity of a social network directly determines the rate at which cultural innovations accumulate and persist.10

Henrich’s model makes a counterintuitive prediction: all else being equal, larger and more connected populations will maintain more complex technologies than smaller and more isolated ones, even if individual cognitive capacity is identical across the groups. The reason is that complex skills are imperfectly transmitted between individuals; each transmission step introduces some probability of degradation. In a large, well-connected network, innovations spread rapidly and the probability that any given skill will be lost approaches zero; in a small, isolated group, even skills that exist in the population can be irretrievably lost when the individuals who possess them die without adequately transmitting them.9, 10 This model has been tested against the ethnographic record. Kline and Boyd’s analysis of Oceanic island populations found that toolkit complexity scales positively with population size, and that islands with high rates of inter-island contact, a proxy for network connectivity, tend to have more tool types than population size alone would predict, a finding consistent with Henrich’s framework.17
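The logic of lossy transmission can be made concrete with a toy simulation. The sketch below is an illustration rather than Henrich's published model: it assumes that every learner copies the most skilled individual, that copies fall short of the model by a fixed amount on average, and that copying luck follows a Gumbel distribution. The function name `simulate_skill` and the parameter values `alpha` and `beta` are hypothetical choices made for this example.

```python
import math
import random


def simulate_skill(pop_size, generations=200, alpha=4.0, beta=1.0, seed=0):
    """Return the best skill level after `generations` of lossy copying.

    Each generation, every learner copies the current best performer.
    A copy is typically worse than the model by `alpha` (transmission loss),
    but Gumbel-distributed luck of scale `beta` lets a rare learner do better.
    All parameter values are illustrative assumptions, not estimates.
    """
    rng = random.Random(seed)
    best = 0.0  # arbitrary starting skill level
    for _ in range(generations):
        attempts = []
        for _ in range(pop_size):
            u = rng.random()
            while u == 0.0:  # avoid log(0); random() can return exactly 0.0
                u = rng.random()
            # Inverse-CDF sample from a Gumbel located `alpha` below the model.
            attempts.append(best - alpha - beta * math.log(-math.log(u)))
        best = max(attempts)  # the next generation copies the new best
    return best


if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(n, round(simulate_skill(n), 1))
```

Under these assumed values, the smallest population drifts toward lower skill while the larger ones climb, even though the simulated learners are identical in every run. Only the number of learners sampling around the best model differs.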

Applied to the paleoanthropological record, the cultural brain hypothesis offers an explanation for the mosaic and episodic character of African MSA behavioral complexity that does not require positing biological evolution of cognitive capacity at multiple points in time. Periods of behavioral florescence—such as the richly symbolic Howiesons Poort industry at around 65,000–60,000 years ago, followed by an apparent simplification of the cultural record—may reflect demographic expansions and contractions driven by climate rather than cognitive advance and retreat. The genuine “revolution” on this account was not neurological but demographic: the crossing of population and connectivity thresholds at which cumulative cultural evolution became self-sustaining. The African populations that dispersed into Eurasia after approximately 70,000 years ago apparently carried a cultural package whose complexity was maintained and amplified by the demographic expansion that followed, producing the relatively continuous and dense record of behavioral modernity visible in the European Upper Paleolithic.10, 18

Cave art and the explosion of visual culture

The visual art of the European Upper Paleolithic remains among the most striking expressions of modern human cognition in the prehistoric record. The Chauvet Cave in the Ardèche region of southern France, discovered in 1994, preserves an astonishing suite of paintings depicting lions, rhinoceroses, mammoths, bears, horses, and other animals executed with technical sophistication that matches or exceeds later Paleolithic cave art. Radiocarbon dates on charcoal from the paintings and from the cave floor place the Chauvet imagery at approximately 36,000–32,000 years ago, making it among the oldest dated figurative art in Europe.13 The artists used shading, perspective cues, and sequential figures to suggest movement, a set of representational conventions that implies highly developed spatial cognition and a capacity for visual narrative.

The geographic expansion of cave art evidence beyond Europe has significantly complicated the long-held assumption that figurative art originated in western Europe. In 2014, Maxime Aubert and colleagues published uranium-series dates for cave art from the island of Sulawesi in Indonesia, obtaining minimum ages of approximately 39,900 years for a hand stencil and 35,400 years for a babirusa figure, ages comparable to those of the oldest European cave art.12 A subsequent study by Brumm and colleagues in 2021 pushed the Sulawesi dates further back, reporting a minimum age of approximately 45,500 years for a figurative scene depicting warty pigs, which would make it among the oldest known figurative art anywhere in the world.11 The Sulawesi evidence is crucial because it suggests that the artistic capacity visible in European Upper Paleolithic caves was carried out of Africa by the dispersing populations that colonized Southeast Asia, not invented independently in Europe. Cave art is accordingly not a marker of European cognitive uniqueness but a globally distributed expression of a symbolic capacity that early humans had been expressing in non-figurative forms in Africa for tens of thousands of years.

The question of what drove the cave art explosion—whether it reflects a cognitive threshold, a demographic amplification, a ritual intensification in response to environmental stress, or some combination of these—has no settled answer. What is clear is that figurative cave art is cognitively demanding in specific ways: it requires the ability to hold a three-dimensional mental model of an animal and project it onto a two-dimensional surface, to plan the execution of an image across time, and to share a convention of pictorial representation with other members of a social group. These demands implicate virtually the entire suite of cognitive capacities that define modern human thought, and the consistent quality of the earliest known figurative art—from Sulawesi to Chauvet—suggests that by the time figurative art appears in the record, the cognitive infrastructure for it had already reached full maturity.11, 13

Cognitive capacity and cultural complexity

The relationship between cognitive capacity and cultural output is not linear, and this non-linearity is perhaps the most important lesson of the cognitive revolution debate. If the cultural brain hypothesis is correct, then the same neurological endowment can produce radically different levels of cultural complexity depending on the size and connectivity of the social network in which it is embedded. A cognitively modern individual in a small, isolated band of thirty people, losing knowledge to mortality faster than it can be acquired and transmitted, might produce a material culture indistinguishable from that of a cognitively less sophisticated population. The same individual in a large, connected regional network, accumulating innovations across generations on a foundation of reliably transmitted cultural inheritance, might produce technologies and symbolic systems of extraordinary complexity.9, 10

This framework has implications for how researchers interpret the uneven distribution of behavioral complexity in the African MSA. The oscillating record—in which episodes of remarkable symbolic and technological sophistication are followed by apparent simplifications before re-emerging in later assemblages—may not reflect oscillating cognitive capacity but oscillating demography. The Last Interglacial period around 125,000 years ago and the periods of relative climatic stability in the African Middle Pleistocene may have supported larger, more connected populations capable of maintaining complex cultural systems; the intervening periods of aridity and fragmentation may have reduced population sizes and network connectivity below the thresholds at which complex traditions remain stable.5, 10
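The demographic reading of this oscillating record can be sketched with a toy calculation. It assumes a simple copying model in which every learner imitates the most skilled individual with Gumbel-distributed error, for which the expected change in skill per generation is `-alpha + beta * (gamma + ln N)`, where `gamma` is the Euler–Mascheroni constant and `N` is the number of learners. The function names, parameter values, and population schedule below are hypothetical and chosen only for illustration; they are not estimates for any real MSA population.

```python
import math

EULER_GAMMA = 0.5772156649  # Euler–Mascheroni constant


def expected_drift(pop_size, alpha=4.0, beta=1.0):
    """Expected per-generation change in skill for `pop_size` learners.

    `alpha` is the average transmission loss and `beta` the spread of
    copying luck; both values are assumptions made for this sketch.
    """
    return -alpha + beta * (EULER_GAMMA + math.log(pop_size))


def complexity_track(pop_sizes, start=50.0, floor=0.0):
    """Accumulate expected skill over a sequence of population sizes."""
    level = start
    track = []
    for n in pop_sizes:
        # Skill cannot fall below `floor` (no technology left to lose).
        level = max(floor, level + expected_drift(n))
        track.append(level)
    return track


# Hypothetical schedule: a connected phase, a fragmented phase, a recovery.
schedule = [200] * 20 + [10] * 20 + [200] * 20
track = complexity_track(schedule)

if __name__ == "__main__":
    for generation in (19, 39, 59):
        print(generation + 1, round(track[generation], 1))
```

In this sketch the cognitive parameters never change, yet the expected complexity rises, declines during the fragmented phase, and rises again once the population recovers. With the assumed `alpha / beta` ratio of 4, the break-even population is `exp(4 - gamma)`, or about 31 learners.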

Wynn and Coolidge have argued from a cognitive archaeology perspective that the key neurological innovation enabling modern cultural complexity was an enhancement of working memory—specifically the capacity for extended event memory and the ability to simulate future scenarios in detail. On their account, this enhancement, probably achieved through modifications to prefrontal cortical circuits, enabled the planning depth required for complex tool manufacture, long-distance logistical organization, and the construction of symbolic narratives.20 Whether such an enhancement occurred as a single genetic event, a gradual accumulation of polygenic change, or an emergent property of brain-environment interaction during development remains unknown. What the convergence of archaeological, genetic, and cognitive evidence does suggest is that by 100,000 years ago, and possibly considerably earlier, the biological substrate of modern human cognition was in place—and that the subsequent history of cultural complexity is primarily a story of demography, ecology, and the self-amplifying dynamics of cumulative cultural evolution rather than of further biological change.5, 10, 20

References

The revolution that wasn’t: a new interpretation of the origin of modern human behavior

Pigment use in the Middle Stone Age at Pinnacle Point Site 13B, Mossel Bay, South Africa

Early Homo sapiens with modern human upper facial morphology evolved in a South African refugium

Bone harpoons and the evolution of fishing technology in the Middle Stone Age of Africa

The human revolution: behavioural and biological perspectives on the origins of modern humans

The secret of our success: how culture is driving human evolution, domesticating our species, and making us smarter

Radiocarbon dating of the Chauvet Cave: further results and implications for the origins of art in Europe

Rethinking the human revolution: new behavioural and biological perspectives on the origin and dispersal of modern humans

The emergence of modern human behavior: Middle Stone Age engravings from South Africa

How similar are the cognitive architectures of Neanderthals and behaviourally modern humans?