Overview

- Human language is distinguished from all other animal communication systems by its recursive syntax, displacement (the ability to refer to things not present), and open-ended productivity, properties that together enable the expression of an effectively infinite range of meanings from a finite set of elements.

- The biological infrastructure for speech includes a descended larynx, precise neural control of the tongue and breathing, specialized cortical areas (Broca's and Wernicke's), and genes such as FOXP2 whose derived human variants are essential for the fine motor sequencing that spoken language demands.

- The world's approximately 7,000 living languages are grouped into several hundred families, yet all share deep structural universals — including noun-verb distinction, negation, and recursion — suggesting that language capacity is a species-wide biological endowment shaped by natural selection over at least several hundred thousand years.

Human language is the primary means by which members of the species Homo sapiens communicate complex thought, and it stands as one of the most distinctive attributes of the human lineage. Unlike the communication systems of other animals, which are typically limited to a fixed repertoire of signals tied to immediate stimuli, human language is characterized by an open-ended capacity to generate novel utterances, to refer to objects and events remote in time and space, and to embed clauses within clauses in a recursive hierarchy of meaning.1, 2 These properties enable humans to transmit accumulated knowledge across generations, coordinate collective action among large groups, and construct the elaborate cultural systems that define every known human society. The study of language spans linguistics, neuroscience, genetics, anthropology, and evolutionary biology, and touches on foundational questions about the nature of the human mind.

Approximately 7,000 languages are spoken in the world today, organized into several hundred language families, yet all share deep structural regularities that point to a common biological foundation.16, 12 How this capacity evolved, what neural and genetic machinery underlies it, why languages diversify and change, and how language shapes human cognition are among the most actively investigated questions in the human sciences.

Design features of human language

Linguists and cognitive scientists have identified several properties that, taken together, distinguish human language from all known animal communication systems. The linguist Charles Hockett enumerated a set of "design features" in the 1960s, and subsequent theoretical work, especially the influential framework proposed by Hauser, Chomsky, and Fitch in 2002, has refined the list to a small number of core capacities.1, 2

Discreteness and duality of patterning. Human language operates on two levels simultaneously. At the lower level, a small inventory of meaningless sound units (phonemes), typically 20 to 60 per language, is combined according to language-specific rules to form meaningful units (morphemes and words). At the higher level, those meaningful units are themselves combined into phrases and sentences. English, for example, has roughly 40 phonemes but over 170,000 words in current use. This duality of patterning gives language extraordinary combinatorial power from minimal raw material.2, 3
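The combinatorial arithmetic behind this point can be sketched in a few lines of Python. The sketch assumes, purely for illustration, that every sequence of phonemes is permitted; real languages impose phonotactic constraints that exclude most sequences, so the figures are upper bounds rather than vocabulary estimates. The function name `possible_strings` is hypothetical, and the inventory size of 40 is the approximate English figure quoted above.

```python
def possible_strings(inventory_size: int, max_length: int) -> int:
    """Count all phoneme sequences of length 1 through max_length.

    Assumption: any sequence is allowed (no phonotactic rules), so the
    result is an upper bound, not an estimate of real vocabulary size.
    """
    return sum(inventory_size ** n for n in range(1, max_length + 1))


# Growth of the space of possible forms for a 40-phoneme inventory.
for length in range(1, 6):
    print(length, possible_strings(40, length))
```

Under these assumptions, sequences of up to four phonemes already number more than 2.6 million, far more than the 170,000 words cited for English, which illustrates how a small inventory leaves ample room for a large lexicon.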

Productivity. Speakers routinely produce and understand sentences they have never encountered before. This open-endedness, sometimes called productivity or creativity, arises from the rule-governed combination of discrete elements and distinguishes language from the closed call systems of vervet monkeys or the waggle dance of honeybees, in which each signal has a fixed meaning.1

Displacement. Humans can use language to refer to things that are not present in the immediate environment — past events, future plans, hypothetical scenarios, abstract concepts, and entities that exist only in the imagination. While a few animal communication systems exhibit limited displacement (honeybee dances encode the distance and direction of a food source the dancer has visited), only human language allows displacement across unlimited temporal and spatial scales and into entirely fictional or abstract domains.2

Recursion. Perhaps the most theoretically significant property of human language is its recursive syntax: the ability to embed a phrase or clause within another phrase of the same type, yielding sentences of potentially unbounded complexity. The sentence "The dog that chased the cat that ate the fish ran away" embeds a relative clause within a relative clause, and this embedding can in principle be extended indefinitely. Hauser, Chomsky, and Fitch proposed in 2002 that recursion may be the sole component of the language faculty that is unique to humans and unique to language, an influential but contested hypothesis.1
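The embedding pattern in the example sentence can be sketched as a recursive function: a noun phrase may contain a relative clause, which in turn contains another noun phrase. This is a toy illustration under simplifying assumptions (a single clause pattern, no agreement or tense handling), not a model of any actual grammar; the function names `noun_phrase` and `sentence` are hypothetical.

```python
def noun_phrase(nouns, verbs):
    """Build 'the N1 that V1 the N2 that V2 ... the Nk' recursively.

    Expects len(nouns) == len(verbs) + 1.
    """
    head = f"the {nouns[0]}"
    if not verbs:
        # Base case: a bare noun phrase with no relative clause.
        return head
    # Recursive case: the relative clause contains another noun phrase.
    return f"{head} that {verbs[0]} {noun_phrase(nouns[1:], verbs[1:])}"


def sentence(nouns, verbs, predicate):
    """Attach a main predicate to the (possibly nested) subject noun phrase."""
    subject = noun_phrase(nouns, verbs)
    return subject[0].upper() + subject[1:] + " " + predicate + "."


print(sentence(["dog", "cat", "fish"], ["chased", "ate"], "ran away"))
```

Adding one more noun and verb to the input lists adds one more level of embedding, which is the sense in which the construction can in principle be extended indefinitely.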

The anatomy of speech

Spoken language, the modality used by the vast majority of the world's languages, depends on a suite of anatomical adaptations in the vocal tract, the respiratory system, and the nervous system that are absent or rudimentary in other primates.6, 2

The most discussed anatomical specialization is the position of the larynx. In adult humans, the larynx sits lower in the throat than in other mammals of comparable body size, creating an expanded pharyngeal cavity above the vocal folds. This descended larynx, first emphasized by Philip Lieberman and Edmund Crelin in 1971, enlarges the resonating space available for shaping vowel sounds and is thought to be essential for producing the full range of vowel contrasts found in human speech.6 However, subsequent research by Fitch and Reby showed that a descended larynx also occurs in some non-human species (including red deer and certain primates) for purposes unrelated to speech, suggesting that laryngeal descent alone does not explain the emergence of language.7

Equally important is the fine neural control of the tongue, which is the primary articulator in speech production. The human hypoglossal nerve, which innervates the tongue muscles, is substantially larger relative to body size than in any other primate, reflecting the exceptional precision required for the rapid articulatory gestures of speech — movements that can exceed 15 distinct configurations per second in normal conversation.2 Humans also possess uniquely precise control over respiration: unlike other great apes, which breathe in a pattern dominated by rib cage movement, humans can independently control the diaphragm, intercostal muscles, and abdominal muscles to modulate subglottal air pressure with the fine temporal resolution that continuous speech demands.2

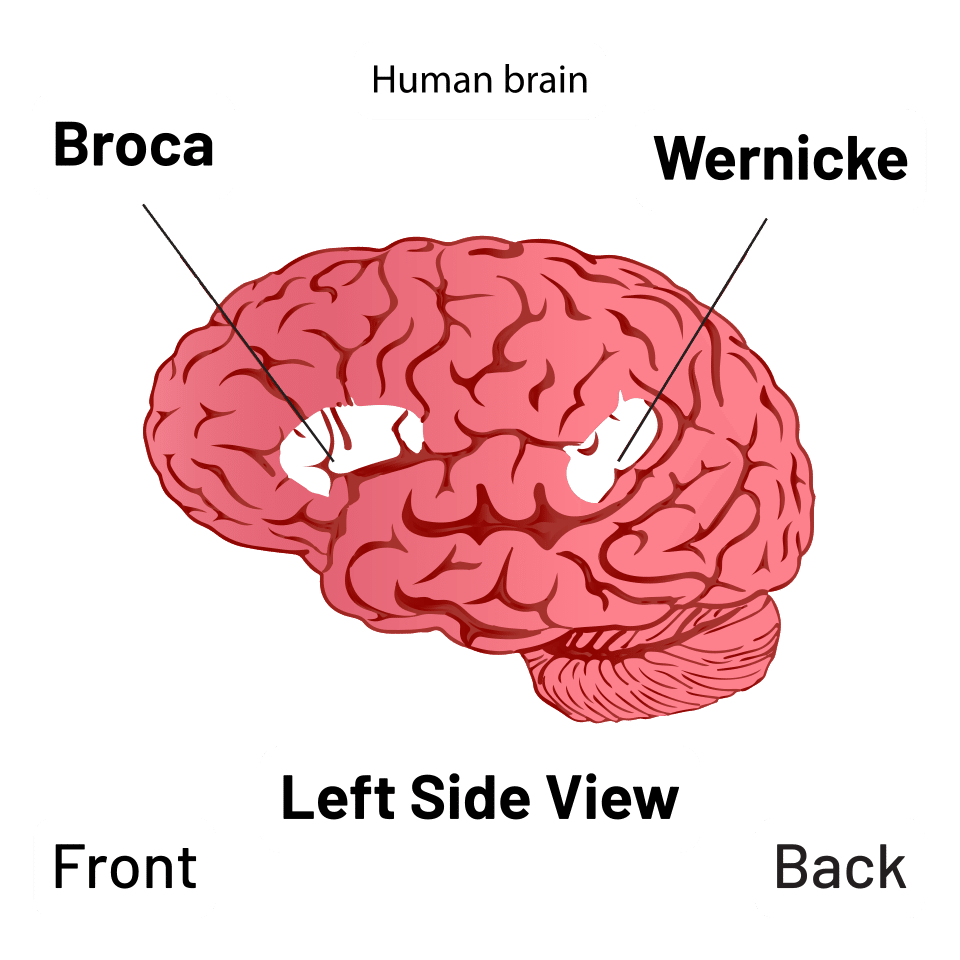

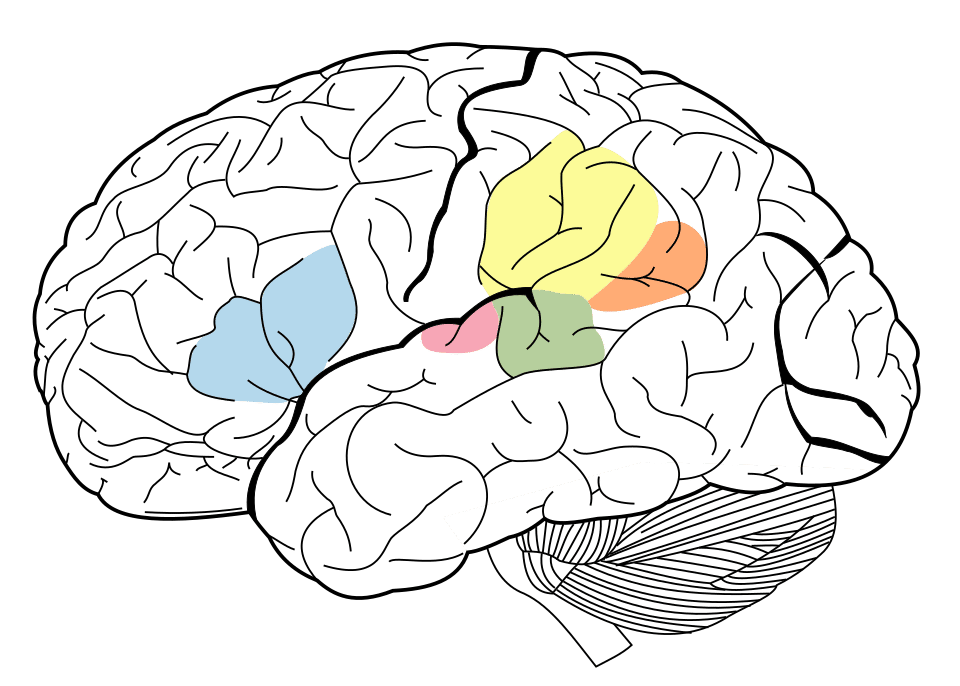

In the brain, two cortical regions have been recognized since the nineteenth century as critical for language. Broca's area, located in the left inferior frontal gyrus, is involved in speech production, syntactic processing, and the planning of articulatory sequences. Wernicke's area, in the left posterior superior temporal gyrus, is involved in speech comprehension and the retrieval of word meanings. Damage to Broca's area produces non-fluent aphasia (halting, effortful speech with relatively preserved comprehension), while damage to Wernicke's area produces fluent but often meaningless speech with severely impaired comprehension.22, 2 Modern neuroimaging has revealed that language processing is distributed across a far broader network than these classical areas, involving prefrontal, temporal, and parietal cortices in both hemispheres, but the lateralization of core language functions to the left hemisphere remains one of the most robust findings in human neuroscience.20

FOXP2 and the genetics of language

The discovery of the gene FOXP2 in 2001 marked a turning point in the molecular investigation of language. Researchers studying the KE family, a multigenerational London family in which approximately half the members suffered from a severe speech and language disorder characterized by difficulty producing rapid sequences of orofacial movements (developmental verbal dyspraxia), identified a point mutation in FOXP2 — a gene encoding a transcription factor on chromosome 7 — as the cause of the disorder.4 Affected family members had difficulty not only with speech articulation but also with grammatical processing, particularly the application of morphological rules, suggesting that FOXP2 plays a role in the neural circuits underlying both the motor and the computational aspects of language.8

Comparative genomic analyses subsequently revealed that the human version of FOXP2 differs from the chimpanzee version by two amino acid substitutions, and that these substitutions were fixed in the human lineage within the past several hundred thousand years, after the divergence from the common ancestor with chimpanzees.5 The derived human variant was also found in Neanderthal DNA extracted from fossils in El Sidrón, Spain, suggesting that the FOXP2 mutations predated the split between modern humans and Neanderthals and may have been present in their common ancestor roughly 300,000 to 400,000 years ago.24

FOXP2 is emphatically not "the language gene." Language is a complex trait depending on many genes acting across developmental time. But FOXP2 remains the best-studied genetic contributor to the neural infrastructure of speech, and its discovery demonstrated that the biological substrate of language is amenable to molecular investigation.8, 2

Theories of language origin

The evolutionary origin of language remains one of the most debated questions in science, in large part because language leaves no direct fossil record: sounds do not fossilize, and the archaeological proxies for linguistic behavior (symbolic artifacts, complex tool manufacture, long-distance trade networks) are indirect and ambiguous. Three broad families of theories have dominated the discussion.2, 10

Gestural-origin theories propose that language began as a system of manual gestures, building on the observation that great apes use intentional, flexible gestures to communicate while their vocalizations are largely involuntary and affective. In this view, the transition from gesture to speech occurred gradually as vocal accompaniments to gestures became increasingly conventionalized, eventually supplanting gesture as the primary channel. The existence of fully grammatical sign languages, which are processed by the same neural circuits as spoken languages, supports the plausibility of a gestural precursor.10, 20

Vocal-origin theories argue that language evolved directly from ancestral primate vocalizations, which became increasingly voluntary, learned, and combinatorial under selection pressures related to social bonding, cooperative hunting, or tool manufacture. A key challenge for these theories is explaining how the transition occurred from involuntary, subcortically controlled calls to the voluntary, cortically controlled speech of humans.2

Musical protolanguage theories, most fully articulated by Steven Mithen, propose that an intermediate stage between animal communication and modern language was a holistic, musical, and emotionally expressive vocal system — a "Hmmmm" system (Holistic, Manipulative, Multi-modal, Musical, and Mimetic) — that conveyed emotional and social meaning through pitch, rhythm, and melody before the emergence of referential words and compositional syntax.11 This hypothesis draws support from the deep emotional power of music, the universality of infant-directed speech (which is melodically exaggerated), and the observation that musical and linguistic processing share overlapping neural substrates.11, 2

Current evidence increasingly favors a gradual, incremental emergence of language over hundreds of thousands of years rather than a sudden appearance, though the question remains far from settled.2

Language universals

Despite the extraordinary surface diversity of the world's languages, systematic cross-linguistic comparison has revealed a substantial number of structural properties that recur across unrelated language families and geographical regions. These language universals were first catalogued in a landmark 1963 volume edited by Joseph Greenberg, who identified 45 implicational universals — statements of the form "if a language has property X, it also has property Y."12

Among the most robust universals are: every language distinguishes nouns from verbs; every language has a way to negate propositions; every language has mechanisms for asking questions, issuing commands, and making declarative statements; every language has pronouns with at least a first-person and a second-person distinction; and every language permits the formation of relative clauses, enabling speakers to modify a noun with a clausal description.12, 3 Greenberg also identified strong statistical tendencies in word order: languages in which the verb precedes the object (VO languages) tend to use prepositions, while languages in which the object precedes the verb (OV languages) tend to use postpositions.12
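The word-order tendency described above can be checked mechanically against a sample of languages. The sketch below uses a small hand-picked sample chosen for illustration only; it is not drawn from any database, and six languages cannot establish a statistical tendency. The names `SAMPLE`, `EXPECTED`, `tally`, and `exceptions` are hypothetical.

```python
from collections import Counter

# Illustrative sample: language -> (verb-object order, adposition type).
# Hand-picked for this sketch, not taken from a typological database.
SAMPLE = {
    "English": ("VO", "prepositions"),
    "Spanish": ("VO", "prepositions"),
    "Japanese": ("OV", "postpositions"),
    "Turkish": ("OV", "postpositions"),
    "Hindi": ("OV", "postpositions"),
    "Persian": ("OV", "prepositions"),
}

# The pairing predicted by the tendency described in the text.
EXPECTED = {"VO": "prepositions", "OV": "postpositions"}


def tally(sample):
    """Count how many languages show each (order, adposition) pairing."""
    return Counter(sample.values())


def exceptions(sample):
    """Return the languages whose adposition type departs from the tendency."""
    return sorted(
        name for name, (order, adposition) in sample.items()
        if EXPECTED[order] != adposition
    )


print(tally(SAMPLE))
print(exceptions(SAMPLE))
```

In this sample Persian, which places the object before the verb but uses prepositions, is the one exception, which is why the pattern is described as a strong tendency rather than an absolute rule.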

The World Atlas of Language Structures (WALS), a comprehensive database documenting structural features across more than 2,600 languages, has greatly expanded the empirical basis for studying universals and near-universals.13 The existence of these deep regularities has been interpreted in two ways. Nativist linguists, following Noam Chomsky, argue that universals reflect an innate Universal Grammar — a genetically specified set of abstract principles and parameters that constrain the form any human language can take.9, 23 Functionalist and usage-based linguists counter that universals emerge from domain-general cognitive constraints (memory, processing efficiency, learnability) and from the communicative pressures that all languages face.3 Both perspectives agree, however, that universals point to a shared biological endowment for language that is uniform across the species.

Figure: Word order typology across the world's languages.13

Language families and diversification

The world's approximately 7,000 living languages are not independent inventions but are organized into families of languages descended from common ancestors, much as biological species descend from shared ancestral populations. A language family is established through the comparative method, which identifies systematic sound correspondences between words of similar meaning in different languages, reconstructs the ancestral forms (proto-language), and demonstrates that the similarities are too regular and too numerous to be explained by chance or borrowing.14
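The first step of the comparative method, tallying sound correspondences across words of similar meaning, can be sketched as follows. The sketch is heavily simplified: it looks only at word-initial sounds, the segmentation into sounds is supplied by hand, and the seven Latin and English word pairs are a small illustrative selection. Real applications compare full word forms across many languages. The names `COGNATES`, `correspondences`, and `recurring` are hypothetical.

```python
from collections import Counter

# (Latin word, English word, Latin initial sound, English initial sound)
# Initial sounds are supplied by hand; spelling alone would mislead
# (Latin "c" is the sound k, English "th" is a single sound).
COGNATES = [
    ("pater", "father", "p", "f"),
    ("pes", "foot", "p", "f"),
    ("piscis", "fish", "p", "f"),
    ("tres", "three", "t", "th"),
    ("tu", "thou", "t", "th"),
    ("cornu", "horn", "k", "h"),
    ("centum", "hundred", "k", "h"),
]


def correspondences(pairs):
    """Count each (Latin sound, English sound) pairing in initial position."""
    return Counter((latin, english) for _, _, latin, english in pairs)


def recurring(pairs, min_count=2):
    """Keep only pairings attested at least min_count times."""
    return {
        pair: count
        for pair, count in correspondences(pairs).items()
        if count >= min_count
    }


print(recurring(COGNATES))
```

The point of the threshold is that a single matching pair could be chance or borrowing, whereas a pairing that recurs across many unrelated meanings is the kind of regularity the method treats as evidence of common descent.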

Six language families account for the vast majority of the world's speakers. Indo-European, with roughly 3.2 billion native speakers, encompasses languages from English, Spanish, and Hindi to Russian, Greek, and Persian, and traces back to a proto-language spoken perhaps 6,000 to 8,000 years ago, likely in the Pontic-Caspian steppe or Anatolia.15, 14 Sino-Tibetan, with approximately 1.3 billion speakers, includes Mandarin Chinese, Cantonese, Burmese, and Tibetan. Niger-Congo, the largest family by number of languages (over 1,500), dominates sub-Saharan Africa and includes the vast Bantu sub-family. Afroasiatic spans North Africa and the Middle East and includes Arabic, Hebrew, Amharic, Hausa, and the ancient Egyptian language. Austronesian, with over 1,200 languages spread from Madagascar to Hawaii, represents one of the most geographically extensive language dispersals in history. Dravidian, with roughly 250 million speakers, is concentrated in southern India.16, 21

Languages diversify through the same basic mechanism as biological species: when a speech community is divided by geographical, social, or political barriers, the separate populations accumulate independent innovations in pronunciation, vocabulary, and grammar until mutual intelligibility is lost. The rate of linguistic change varies, but the comparative method reaches its practical limit at roughly 8,000 to 10,000 years, beyond which sound correspondences become too eroded to detect reliably.14 Computational phylogenetic methods adapted from evolutionary biology have provided quantitative estimates of divergence times for several major families, complementing and sometimes challenging traditional linguistic chronologies.15

Language endangerment is a pressing concern. Of the world's approximately 7,000 languages, roughly 40 percent are classified as endangered, and linguists estimate that one language falls silent approximately every two weeks. The vast majority of languages have fewer than 10,000 speakers, and many have fewer than 100. The loss of a language represents an irreversible loss of cultural knowledge, oral literature, and a unique window into the range of possible human cognitive and grammatical systems.16

Writing systems

Writing is a technology for encoding language in a durable visual medium, and its invention, which occurred independently at least three times in human history, ranks among the most consequential developments in the history of civilization. Unlike spoken language, which is a biological capacity that emerges in every typically developing human, writing is a cultural invention that must be explicitly taught and learned, and for most of the approximately 300,000-year history of Homo sapiens, all linguistic communication was unwritten.17, 18

The earliest known writing system is proto-cuneiform, which appeared in southern Mesopotamia (modern Iraq) around 3400 to 3100 BCE in the context of temple administration at Uruk. These earliest texts are primarily accounting records (lists of commodities, quantities of grain, and numbers of livestock) written with pictographic signs impressed into clay tablets. Over the following centuries, the signs became increasingly abstract and stylized into the wedge-shaped marks characteristic of cuneiform, and the system expanded from a bookkeeping tool into a full writing system capable of recording narrative, law, literature, and science.18

Egyptian hieroglyphs emerged at roughly the same time or slightly later, around 3200 BCE, and may have been independently invented or inspired by the Mesopotamian example. Chinese script, attested from the Shang dynasty oracle bone inscriptions of approximately 1200 BCE, represents a clearly independent invention. Mesoamerican writing, including the Maya script and earlier Zapotec and Olmec inscriptions, constitutes yet another independent tradition, with origins possibly as early as 600 BCE.17

Writing systems are classified by the size of the linguistic unit each symbol represents. Logographic systems (such as Chinese characters) use symbols that represent entire morphemes or words. Syllabaries (such as Japanese kana or the Cherokee syllabary created by Sequoyah in the 1820s) use symbols representing syllables. Alphabets (such as the Latin, Greek, and Cyrillic scripts) use symbols representing individual consonant and vowel phonemes. Abjads (such as the Hebrew and Arabic scripts in their unvoweled forms) represent primarily consonants, leaving vowels to be inferred from context. Abugidas (such as the Devanagari script used for Hindi and Sanskrit) represent consonant-vowel units with a default vowel that can be modified by diacritical marks.17 In practice, most scripts are mixed systems that combine elements of more than one type.
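The five-way classification can be written down as a small lookup structure, which makes the organizing principle (the unit each symbol represents) explicit. This is an illustrative sketch: the names `ScriptType`, `SCRIPTS`, `unit_of`, and `scripts_of` are hypothetical, each script is assigned a single type even though most real scripts are mixed, and Arabic is filed under abjads on the basis of its usual unvoweled form.

```python
from enum import Enum


class ScriptType(Enum):
    """Script types, valued by the linguistic unit a symbol represents."""
    LOGOGRAPHIC = "morpheme or word"
    SYLLABARY = "syllable"
    ALPHABET = "individual consonant or vowel phoneme"
    ABJAD = "consonant, with vowels inferred from context"
    ABUGIDA = "consonant-vowel unit with a modifiable default vowel"


# Simplification: one type per script, although most scripts are mixed.
SCRIPTS = {
    "Chinese characters": ScriptType.LOGOGRAPHIC,
    "Japanese kana": ScriptType.SYLLABARY,
    "Cherokee": ScriptType.SYLLABARY,
    "Latin": ScriptType.ALPHABET,
    "Greek": ScriptType.ALPHABET,
    "Cyrillic": ScriptType.ALPHABET,
    "Hebrew": ScriptType.ABJAD,
    "Arabic": ScriptType.ABJAD,
    "Devanagari": ScriptType.ABUGIDA,
}


def unit_of(script: str) -> str:
    """Return the linguistic unit represented by one symbol of the script."""
    return SCRIPTS[script].value


def scripts_of(kind: ScriptType) -> list:
    """List the example scripts of a given type, alphabetically."""
    return sorted(name for name, t in SCRIPTS.items() if t is kind)


print(unit_of("Devanagari"))
print(scripts_of(ScriptType.ALPHABET))
```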

Language and cognition

The relationship between language and thought has been debated for centuries and remains one of the most actively investigated topics in cognitive science. The strongest version of the claim that language shapes thought, often attributed to Benjamin Lee Whorf and known as linguistic determinism, holds that the language one speaks determines the thoughts one can think; it is not supported by modern evidence. Speakers of languages that lack a dedicated word for a concept can still perceive and reason about that concept.19, 23

A weaker version, known as linguistic relativity, holds that language influences habitual patterns of thought without strictly determining them. Experimental evidence has accumulated showing that speakers of different languages do exhibit measurable differences in how they attend to and remember certain features of experience. Languages differ in how they partition the color spectrum, encode spatial relations, grammaticalize evidentiality (the source of a speaker's knowledge), and mark temporal distinctions, and these differences correlate with subtle cognitive biases in non-linguistic tasks.19, 3

The study of sign languages has provided especially powerful evidence that language is fundamentally a cognitive capacity rather than a speech capacity. Sign languages such as American Sign Language (ASL), British Sign Language (BSL), and the many other signed languages used by deaf communities worldwide are fully grammatical languages with phonology, morphology, syntax, and all the design features of spoken languages, including recursion and displacement. They are processed by the same left-hemisphere cortical areas (Broca's and Wernicke's regions) as spoken languages, and damage to these areas in deaf signers produces aphasic deficits that closely parallel those seen in hearing speakers.20 The existence of sign languages demonstrates that the human language faculty is modality-independent: it can operate through any sensorimotor channel — vocal-auditory, manual-visual, or even tactile — that provides sufficient bandwidth for the transmission of discrete, combinatorial signals.20, 1

Language also serves as a window into the architecture of the human mind more broadly. The speed with which children acquire their native language — mastering the core grammar by age four or five without explicit instruction — has been taken as evidence for an innate biological endowment for language, what Chomsky termed the Language Acquisition Device and Pinker called the "language instinct."23, 9 The universality of this developmental trajectory across cultures, and the existence of critical or sensitive periods beyond which full native-like acquisition becomes difficult, reinforce the conclusion that language is not simply a cultural invention but a biological adaptation shaped by natural selection over deep evolutionary time.23, 2