Overview

- The fine-tuning argument contends that certain physical constants and initial conditions of the universe are set within extraordinarily narrow ranges necessary for the existence of complex life, and that this precision is best explained by intentional design rather than by chance alone.

- Critics have advanced several powerful objections, including the multiverse hypothesis (which dissolves the improbability by positing a vast ensemble of universes with varying constants), the normalizability problem (which argues that probability cannot be meaningfully defined over an infinite range of possible constant values), and the anthropic selection effect (which notes that observers can only exist in universes compatible with their existence, making the observation trivially expected).

- The debate remains unresolved and is closely entangled with open questions in physics and cosmology: whether a multiverse exists, whether the constants of nature could genuinely have been different, and whether probability theory can be coherently applied to unique, unrepeatable events like the origin of a universe.

The fine-tuning argument is a contemporary form of the teleological argument that appeals to the apparent fine-tuning of the universe’s physical constants and initial conditions as evidence for the existence of a designer. The argument begins with an empirical observation: many of the fundamental parameters of physics — the cosmological constant, the strength of the strong nuclear force, the masses of elementary particles, the ratio of matter to antimatter, and others — appear to be set within extraordinarily narrow ranges that are necessary for the existence of complex structures, chemistry, and life. Had any of several constants differed by even slight fractions from their actual values, the universe would have been radically inhospitable: stars would not have formed, atoms heavier than hydrogen would not exist, or the universe would have collapsed or expanded too rapidly for any structure to emerge at all. Proponents of the fine-tuning argument contend that this precision calls for an explanation, and that intentional design is the best one available.1, 3, 6

The argument has generated one of the most active debates in contemporary philosophy of religion, drawing on physics, cosmology, probability theory, and the philosophy of science. Critics have responded with a range of objections — from the multiverse hypothesis to the normalizability problem to the anthropic selection effect — and the discussion remains far from settled. What follows is a survey of the argument’s observational basis, its formal structure, the principal objections, and the current state of the debate.18, 20

The observational basis

The empirical foundation of the fine-tuning argument rests on discoveries in twentieth-century physics and cosmology. As physicists developed the Standard Model of particle physics and the standard cosmological model, they identified a set of free parameters — constants whose values are not determined by the theory itself but must be measured experimentally. The question of why these constants take their observed values, rather than some other values, has no known answer within current physics. What has become clear is that many of these values appear to occupy a tiny, life-permitting region within their possible ranges.3, 15

The cosmological constant, denoted Λ, is perhaps the most frequently cited example. This parameter governs the rate of expansion of the universe. Steven Weinberg calculated that if the cosmological constant were positive and more than roughly $10^{-120}$ times its natural scale as predicted by quantum field theory, the universe would have expanded so rapidly that galaxies, stars, and planets could never have formed. If it were large and negative, the universe would have recollapsed almost immediately. The observed value is astonishingly small relative to theoretical expectations (approximately $10^{-122}$ in Planck units) and yet nonzero, a mismatch that has been called “the worst prediction in the history of physics.”16, 15

The strong nuclear force provides another striking case. This force binds protons and neutrons together in atomic nuclei. If the strong coupling constant were roughly 2 percent weaker, protons could not bind with neutrons to form deuterium, a prerequisite for stellar nucleosynthesis, and the universe would contain nothing but hydrogen. If it were a few percent stronger, diprotons (bound states of two protons) would be stable, hydrogen would have been rapidly fused in the early universe, and no long-lived stars could exist. In either case, the production of carbon, oxygen, and all heavier elements essential to chemistry and life would be impossible.3, 1

Fred Hoyle’s discovery of the carbon resonance level illustrates the point with particular clarity. In the 1950s, Hoyle predicted that carbon-12 must possess an excited nuclear state at approximately 7.65 MeV, because without such a resonance the triple-alpha process in stellar cores could not produce carbon in sufficient quantities to account for its cosmic abundance. The resonance was subsequently confirmed experimentally. Hoyle himself remarked that a “commonsense interpretation of the facts” suggested that a “superintellect” had “monkeyed with physics.” Whether or not one accepts Hoyle’s interpretation, the case illustrates how narrow the parameter window is for carbon production.1, 4

Additional examples include the ratio of electromagnetic force to gravitational force (approximately $10^{36}$), which must be close to its observed value for stars to sustain stable nuclear burning over billions of years; the neutron-proton mass difference, which must fall within a narrow range for both neutrons and protons to exist in stable nuclei; and the initial entropy of the universe, which Roger Penrose estimated must have been fine-tuned to one part in $10^{10^{123}}$ to produce a universe with the low-entropy initial conditions necessary for the thermodynamic arrow of time and the formation of structure.21, 3, 15

Selected fine-tuned parameters3, 15, 16

| Parameter | Role | Sensitivity |
|---|---|---|
| Cosmological constant (Λ) | Rate of cosmic expansion | ~1 part in $10^{120}$ |
| Strong coupling constant ($\alpha_s$) | Nuclear binding | ~2% variation prohibits complex nuclei |
| Electromagnetic/gravitational ratio | Stellar stability | Small changes prevent stable stars |
| Neutron–proton mass difference | Nuclear stability | ~1 MeV shift eliminates stable matter |
| Carbon-12 resonance level | Stellar carbon production | ~4% shift in strong force eliminates carbon |
| Initial entropy | Structure formation | ~1 part in $10^{10^{123}}$ (Penrose) |

The argument’s structure

Several philosophers have formulated the fine-tuning argument with varying degrees of precision. The most influential contemporary formulations come from Robin Collins and Richard Swinburne, who cast the argument in probabilistic terms rather than as a deductive proof.

Robin Collins presents the argument using what he calls the “likelihood principle,” drawn from confirmation theory. On this approach, evidence E confirms hypothesis H1 over hypothesis H2 if E is more probable given H1 than given H2. Collins then argues as follows:

P1. The fine-tuning of the universe’s constants and initial conditions is highly improbable under the hypothesis of a single, undesigned universe (the “naturalistic single-universe hypothesis”).

P2. The fine-tuning is not highly improbable under the design hypothesis (since a designer would have reason to create a life-permitting universe).

C. Therefore, by the likelihood principle, fine-tuning provides significant evidence favoring the design hypothesis over the naturalistic single-universe hypothesis.

Collins is careful to note that the argument does not purport to prove God’s existence; it claims only that fine-tuning is a confirming instance for theism relative to naturalistic single-universe scenarios. This framing makes the argument a piece of probabilistic evidence rather than a demonstrative proof.6
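The likelihood principle can be written compactly. The following is a sketch in standard probability notation, where $E$ stands for the fine-tuning evidence, $H_1$ for the design hypothesis, and $H_2$ for the naturalistic single-universe hypothesis. Treating the likelihood ratio as the measure of evidential strength is a common convention and is assumed here for illustration.

$$
E \text{ favors } H_1 \text{ over } H_2 \iff P(E \mid H_1) > P(E \mid H_2), \qquad \text{strength of support} \sim \frac{P(E \mid H_1)}{P(E \mid H_2)}
$$

On this reading, P1 asserts that $P(E \mid H_2) \ll 1$, P2 asserts that $P(E \mid H_1)$ is not comparably small, and C follows because the ratio is then much greater than one. Nothing in the ratio fixes the prior probabilities of $H_1$ or $H_2$, which is why the conclusion is comparative rather than a claim that design is probable outright.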

Swinburne embeds the fine-tuning argument within a broader cumulative case for theism. In The Existence of God, he employs Bayesian reasoning: given background knowledge and the evidence of fine-tuning, the posterior probability of theism is raised because theism predicts (or at least renders unsurprising) a life-permitting universe, whereas unguided physical processes provide no such expectation. Swinburne argues that God, being omniscient and perfectly good, would have reason to create beings capable of moral and intellectual activity, and that such beings require a stable, law-governed, fine-tuned physical universe. Fine-tuning is thus to be expected on theism and surprising on atheism, yielding a positive confirmation via Bayes’s theorem.5, 12
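The Bayesian update described here can be illustrated numerically. The sketch below is not Swinburne's own calculation: the function name `posterior` and all three input values are hypothetical, chosen only to show the direction in which the posterior moves when the evidence is far more expected on one hypothesis than on its negation.

```python
def posterior(prior_h, p_e_given_h, p_e_given_not_h):
    """Return P(H | E) from Bayes's theorem for a hypothesis H and its negation."""
    if not 0.0 <= prior_h <= 1.0:
        raise ValueError("prior_h must be a probability")
    # Total probability of the evidence across H and not-H.
    evidence = p_e_given_h * prior_h + p_e_given_not_h * (1.0 - prior_h)
    if evidence == 0.0:
        raise ValueError("evidence has zero probability under both hypotheses")
    return p_e_given_h * prior_h / evidence


# Hypothetical inputs, not estimates from the literature.
prior_theism = 0.5
p_ft_given_theism = 0.5     # fine-tuning "unsurprising" on theism
p_ft_given_atheism = 0.001  # fine-tuning "surprising" on atheism

print(posterior(prior_theism, p_ft_given_theism, p_ft_given_atheism))
```

The result is only as good as its inputs. Much of the dispute surveyed in the following sections concerns whether values like `p_ft_given_atheism` can be assigned at all.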

John Leslie offers a different formulation through his well-known firing-squad analogy. If a prisoner survives a firing squad of fifty expert marksmen who all miss, the prisoner should not simply shrug and say “Well, if they hadn’t all missed, I wouldn’t be here to consider the question.” The survival demands an explanation — either the marksmen deliberately missed, or there were millions of executions and this was the one where they all happened to miss. Analogously, the fine-tuning of the universe demands an explanation, and the two principal candidates are design and a multiverse.14

The multiverse response

The most widely discussed naturalistic response to the fine-tuning argument is the multiverse hypothesis: the proposal that our universe is one of an enormous (perhaps infinite) ensemble of universes, each with different values for its physical constants. If sufficiently many universes exist with sufficiently varied constants, then it is no surprise that at least one of them happens to have life-permitting values — and we necessarily find ourselves in that one.2, 13
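The arithmetic behind this response is simple to sketch. Assume, purely for illustration, that each universe independently has the same small probability `p_life` of drawing life-permitting constants. The chance that at least one of `n_universes` does so is then 1 − (1 − p)^N. The function name, the independence assumption, and the numbers below are all hypothetical.

```python
import math


def prob_at_least_one(p_life, n_universes):
    """P(at least one life-permitting universe) = 1 - (1 - p)^N, assuming independence.

    log1p and expm1 keep precision when p_life is far below machine epsilon.
    """
    if not 0.0 <= p_life <= 1.0:
        raise ValueError("p_life must be a probability")
    if n_universes < 0:
        raise ValueError("n_universes must be non-negative")
    if p_life == 1.0:
        # log1p(-1.0) is undefined, so handle certainty separately.
        return 1.0 if n_universes > 0 else 0.0
    return -math.expm1(n_universes * math.log1p(-p_life))


# Hypothetical per-universe probability and ensemble sizes.
for n in (1.0, 1e100, 1e120, 1e140):
    print(n, prob_at_least_one(1e-120, n))
```

The probability stays negligible until the ensemble size becomes comparable to 1/p, then approaches one. This is why the response needs both a very large ensemble and genuine variation in the constants across it.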

The multiverse hypothesis is not merely a philosophical escape hatch; it arises independently within several programs in theoretical physics. Inflationary cosmology, particularly the theory of eternal inflation proposed by Andrei Linde and Alexander Vilenkin, suggests that our observable universe is one “bubble” or “pocket” within a vastly larger inflationary spacetime that continuously spawns new regions with potentially different low-energy physics. String theory, which admits an estimated $10^{500}$ or more distinct vacuum states (the “string landscape”), provides a theoretical mechanism by which different pocket universes could realize different values of the constants. Leonard Susskind has argued that the combination of eternal inflation and the string landscape makes the multiverse not a speculative proposal but a consequence of our best physical theories.2, 13

Proponents of the fine-tuning argument have responded to the multiverse in several ways. Collins argues that the multiverse does not eliminate the need for explanation but merely pushes it back: the multiverse-generating mechanism itself must have the right properties to produce universes with varied constants, and those properties may themselves require fine-tuning. Swinburne contends that the multiverse hypothesis is less simple than theism — it posits a vast proliferation of unobservable entities — and that simplicity is a theoretical virtue that should weigh against it in Bayesian reasoning.6, 5

Others have questioned whether the multiverse is genuinely scientific. George Ellis has argued that if other universes are in principle unobservable, the multiverse hypothesis cannot be empirically tested and therefore falls outside the domain of science proper. This does not necessarily make it false, but it does raise questions about its epistemic status relative to the design hypothesis, which also posits an unobservable entity. The debate over the multiverse’s scientific credentials remains active and unresolved.19

The anthropic principle

Closely related to the multiverse response is the anthropic principle, first formally articulated by Brandon Carter in 1973. Carter distinguished between two versions. The weak anthropic principle (WAP) states that the values of physical constants we observe must be compatible with the existence of observers, since observers can only exist in a universe (or region of a universe) that permits their existence. This is essentially a selection effect: we should not be surprised to find ourselves in a life-permitting universe, because we could not find ourselves anywhere else.11, 1

The strong anthropic principle (SAP), as formulated by Carter, makes a bolder claim: the universe must be such as to permit the development of observers at some point in its history. In its original formulation, this was intended as a statement about the necessary conditions for observation rather than a metaphysical claim about cosmic purpose. However, Barrow and Tipler explored several versions of the SAP that carry stronger metaphysical implications, including a “final anthropic principle” that posits the necessity of intelligent information processing persisting indefinitely. These stronger versions have been widely criticized as speculative and unfalsifiable.1, 10

The WAP, by itself, does not resolve the fine-tuning puzzle without an accompanying multiverse. If there is only one universe, the fact that we can only observe life-permitting conditions does not explain why those conditions obtain. The WAP tells us what we should expect to observe given that we exist, but it does not explain why we exist in the first place. Combined with a multiverse, however, the WAP provides a complete explanation: many universes exist with different constants, and the WAP explains why we observe the particular values we do — because they are the ones compatible with observers. Without a multiverse, critics argue, the WAP reduces to the trivial observation that we are here, which is precisely what needs explaining.18, 14
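A toy simulation can make the combined multiverse-plus-WAP explanation concrete. Everything in the sketch below is an assumption made for illustration: a single constant drawn uniformly from [0, 1), an arbitrary narrow life-permitting window, and the rule that observers arise in every life-permitting universe and nowhere else.

```python
import random


def simulate_ensemble(n_universes, window, seed=0):
    """Draw one constant per universe uniformly from [0, 1).

    A universe is life-permitting if its constant falls in `window`,
    a (low, high) pair. Observers exist only in life-permitting universes.
    Returns (fraction of universes that permit life,
             fraction of inhabited universes whose observers see a
             life-permitting constant).
    """
    low, high = window
    rng = random.Random(seed)
    constants = [rng.random() for _ in range(n_universes)]
    inhabited = [c for c in constants if low <= c < high]
    frac_life = len(inhabited) / n_universes
    # Every observer lives in an inhabited universe, so this fraction is 1
    # whenever at least one inhabited universe exists.
    frac_seen = (
        sum(low <= c < high for c in inhabited) / len(inhabited)
        if inhabited
        else float("nan")
    )
    return frac_life, frac_seen


frac_life, frac_seen = simulate_ensemble(100_000, (0.500, 0.501))
print(frac_life, frac_seen)
```

The first number is small and tracks the width of the window; the second is one by construction. With a single universe the second number is still one, but nothing accounts for that universe landing in the window, which is the gap critics of the stand-alone WAP point to.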

The normalizability objection

One of the most technically formidable objections to the fine-tuning argument comes from Timothy McGrew, Lydia McGrew, and Eric Vestrup, who argue that the probabilities invoked by the argument are ill-defined. The fine-tuning argument requires that we assign a probability to the constants of nature falling within the life-permitting range. To do this, one needs a probability distribution over the possible values of the constants. But what distribution should be used?7

If the possible values of a constant range over all real numbers, and if we have no reason to favor any particular value over any other, the natural choice is a uniform distribution. But a uniform distribution over an infinite range cannot be normalized; that is, it cannot be made to satisfy the requirement that total probability equals one. A constant density is either zero everywhere, in which case the total probability is zero, or positive, in which case the total is infinite. Treated as the limit of ever-wider finite ranges, such a distribution assigns zero probability to every finite interval, and it would do so however wide the life-permitting interval happened to be. The probability of the constants falling within the life-permitting range is therefore not merely small but not well defined. If the probability is undefined, the fine-tuning argument cannot get off the ground, because it depends on the claim that the life-permitting range is improbable.7
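The failure of normalization can be stated in two lines. The symbols are introduced here for illustration and are not taken from the cited paper: $c$ is the constant density, $[a, b]$ is the life-permitting interval, and $R$ is the half-width of a truncated range.

$$
\int_{-\infty}^{\infty} c \, dx =
\begin{cases}
0 & \text{if } c = 0 \\
\infty & \text{if } c > 0
\end{cases}
\qquad \text{so no choice of } c \text{ gives total probability } 1.
$$

$$
\text{On } [-R, R] \text{ with } [a, b] \subseteq [-R, R]: \quad P(a \le x \le b) = \frac{b - a}{2R} \longrightarrow 0 \quad \text{as } R \to \infty.
$$

The second line shows why the limit is uninformative: the probability tends to zero for every finite interval, whatever its width, so the smallness owes nothing to how narrow the life-permitting interval is.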

Defenders of the fine-tuning argument have responded in several ways. Collins argues that the normalizability problem arises only if one insists on a probability distribution over the entire real line, and that there are principled reasons to restrict the range of possible values. For instance, physical considerations may impose natural upper bounds on the values of constants. If the range is finite, a uniform distribution can be normalized, and the argument proceeds. Collins also appeals to an “epistemically illuminated” range — the range over which we have sufficient understanding of the physics to assess life-permittingness — and argues that fine-tuning is striking even relative to this restricted range.6
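Once the range is finite, the calculation is a ratio of widths. The sketch below assumes a uniform prior over a bounded comparison range. The function name and the numbers are hypothetical, and the comparison range merely stands in for whatever bound a restriction such as Collins's would supply.

```python
def life_permitting_fraction(life_window, comparison_range):
    """Probability that a uniformly distributed constant lands in life_window.

    Both arguments are (low, high) pairs. The window must lie inside the
    finite comparison range, over which the uniform prior is normalized.
    """
    life_low, life_high = life_window
    range_low, range_high = comparison_range
    if not range_low < range_high:
        raise ValueError("comparison_range must have positive width")
    if not range_low <= life_low <= life_high <= range_high:
        raise ValueError("life_window must lie inside comparison_range")
    return (life_high - life_low) / (range_high - range_low)


# Hypothetical window of width 0.02 around 1.0, compared against ranges of
# increasing size. The answer depends directly on the range chosen.
for upper in (10.0, 1_000.0, 100_000.0):
    print(upper, life_permitting_fraction((0.99, 1.01), (0.0, upper)))
```

Because the result scales inversely with the width of the comparison range, the defense depends on the bounds being principled rather than arbitrary.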

The normalizability objection remains influential because it targets the mathematical foundations of the argument rather than any particular empirical claim. If sound, it shows not that fine-tuning is unreal but that it cannot be given the precise probabilistic formulation that the argument requires. The debate continues over whether principled restrictions on the range of possible values can rescue the probabilistic reasoning.18, 7

The single-universe objection

A distinct line of criticism challenges the very coherence of assigning probabilities to the constants of a unique universe. Probability, on most interpretations, applies to repeatable events or to classes of outcomes. But the universe is not a repeatable event; it is a singular occurrence. We have no ensemble of universes from which ours was “selected,” no frequency data to anchor probabilistic claims, and no well-defined mechanism by which the constants were “chosen” from a range of alternatives.8, 18

This objection has roots in broader debates about the interpretation of probability. Frequentists, who define probability as the long-run relative frequency of an outcome in repeated trials, have difficulty applying their framework to one-off events. Bayesians, who interpret probability as a measure of rational credence, face the challenge of justifying a prior distribution over the constants when we have no empirical basis for choosing one prior over another. The fine-tuning argument typically presupposes a Bayesian framework, but the choice of prior — which determines how surprising the observed values are — remains deeply contested.7, 20

Victor Stenger has pressed a related point: that the fine-tuning argument implicitly assumes the constants could have been different, but this assumption may be unwarranted. If the constants are fixed by some deeper physical law that we have not yet discovered, they could not have been otherwise, and the question of their improbability does not arise. A future “theory of everything” might show that the constants are uniquely determined by mathematical consistency, leaving no room for fine-tuning. Whether such a theory is forthcoming remains an open question, but the possibility undercuts the assumption that the constants are free parameters requiring an external explanation.8, 17

The “this universe” objection

A related objection, sometimes called the “this universe” objection or the “lottery analogy,” proceeds as follows. In any lottery, the winning combination of numbers is astronomically improbable in advance. Yet some combination must win, and after the draw, we are not surprised that a particular set of numbers came up — we do not infer that the lottery was rigged simply because the winning numbers were improbable. Analogously, whatever values the constants of nature happen to take would be equally improbable, so the mere improbability of our particular set of values does not demand a special explanation.20, 18

Proponents of the fine-tuning argument reject this analogy by distinguishing between two types of improbable outcomes. In an ordinary lottery, every possible outcome is equally unremarkable — no particular combination of numbers is more interesting or significant than any other. But the fine-tuning case is different: the life-permitting values are a special, independently identifiable subset of the possible values. It is not merely that the observed values are improbable, but that they are improbable and they possess a special property (life-permittingness) that is specifiable independently of the observation. This is the distinction Collins draws between “specified” and “unspecified” improbability. An unspecified improbable event requires no explanation; a specified one does.6, 14
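The contrast between the two kinds of improbability can be put in numbers. The sketch below uses a hypothetical 6-from-49 lottery, which the sources do not specify, and a hypothetical `specified` count standing in for the number of outcomes singled out in advance by some independent criterion.

```python
import math


def lottery_probabilities(pool=49, picks=6, specified=1):
    """Compare three probabilities for a draw of `picks` numbers from `pool`."""
    total = math.comb(pool, picks)
    if total == 0:
        raise ValueError("picks must not exceed pool")
    if not 0 <= specified <= total:
        raise ValueError("specified must be between 0 and the number of outcomes")
    return {
        "total_outcomes": total,
        # Any one particular combination is equally improbable.
        "p_single_outcome": 1 / total,
        # Yet some combination is certain to be drawn.
        "p_some_outcome": 1.0,
        # Chance that the draw lands in an independently specified set.
        "p_in_specified_set": specified / total,
    }


print(lottery_probabilities())
```

The lottery objection rests on the second and third entries: each outcome is improbable, yet one is certain to occur. The proponents' reply rests on the fourth: what calls for explanation is the draw landing in a small set that was picked out independently of the result.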

The firing-squad analogy again illustrates the point. Every possible pattern of bullet holes in the wall is equally improbable, but only a very small subset of patterns — those that all miss the prisoner — is independently specifiable as remarkable. Surviving a fifty-person firing squad is not merely improbable; it is improbable in a way that demands an explanation because the outcome is independently significant. Whether the fine-tuning of the constants is analogous to the firing-squad case or to the lottery case remains a central point of contention.14

The selection effect critique

Nick Bostrom has developed a rigorous framework for reasoning about observation selection effects — the systematic biases introduced by the fact that all observations are made by observers who must exist in order to make them. Bostrom argues that many arguments in cosmology, including versions of the fine-tuning argument, fail to properly account for these selection effects.10

The core insight is straightforward. We cannot observe a universe in which we do not exist. This means that any evidence we gather about the universe is necessarily conditioned on the existence of observers. The question is whether this conditioning fully explains the fine-tuning or merely constrains what we can observe. Bostrom argues that the answer depends critically on what background assumptions are in play, including whether a multiverse exists, and that the fine-tuning argument cannot be evaluated in isolation from these assumptions.10, 18

The selection effect critique is related to but distinct from the weak anthropic principle. While the WAP is a general statement about observational constraints, the selection effect critique is a methodological warning about the need to correct for observer selection bias when evaluating probabilistic arguments. Bostrom has shown that failing to make these corrections can lead to fallacious reasoning in both directions — both inflating and deflating the evidential force of fine-tuning. His work has made the debate more rigorous by clarifying exactly what is and is not surprising about our observations, given various background assumptions.10

Some philosophers have argued that the selection effect completely dissolves the fine-tuning puzzle. If we must observe a life-permitting universe in order to observe anything at all, then the observation of life-permitting constants carries no evidential weight; it is precisely what we should expect regardless of whether the universe was designed or undesigned. Proponents of the fine-tuning argument resist this move. The selection effect constrains what we observe, they contend, but it does not make an improbable event probable. Some add that pairing the selection effect with an inferred multiverse risks what Ian Hacking called the “inverse gambler’s fallacy”: concluding from a single improbable outcome that many trials must have taken place.18, 6

Further objections and responses

Beyond the major objections surveyed above, several additional criticisms have been leveled against the fine-tuning argument. One concerns the scope of the design inference. Even if fine-tuning provides evidence for a designer, it does not follow that the designer is the God of traditional theism — an omnipotent, omniscient, perfectly good being. The fine-tuning data, at most, point to an intelligent agent with the power and knowledge to set physical constants. This agent could be a lesser deity, a committee of beings, a natural process in a higher-dimensional space, or something entirely outside the categories of traditional theology. Swinburne acknowledges this limitation but argues that theism is the simplest hypothesis that accounts for the data, invoking Ockham’s razor to favor a single, maximally powerful designer over alternatives.5, 20

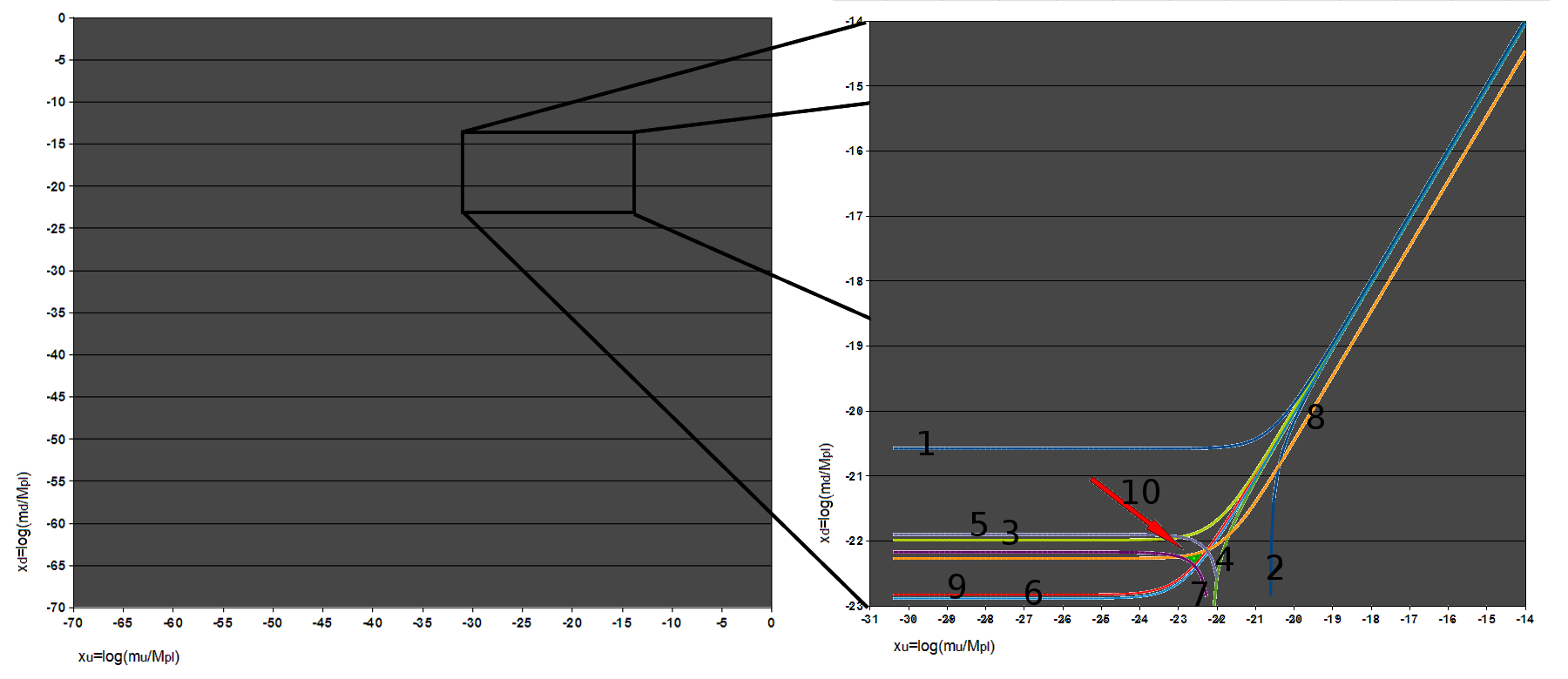

Another objection targets the claim that life-permitting values are a small fraction of the total possible parameter space. Luke Barnes has conducted extensive reviews of the fine-tuning literature and confirms that life-permittingness occupies a small region of parameter space for several constants individually. However, when multiple constants are varied simultaneously, the life-permitting region may be larger or smaller than single-parameter analyses suggest, depending on complex interactions between parameters. The full multi-dimensional parameter space is poorly explored, and claims about the overall fraction of life-permitting possibilities remain uncertain.15

There is also the question of what “life” means in this context. The fine-tuning argument typically assumes that life requires carbon-based chemistry, liquid water, and stable long-lived stars. But these may be parochial assumptions. If life could take radically different forms — silicon-based, plasma-based, or forms that we cannot currently imagine — then the range of life-permitting constants might be far wider than the argument assumes. Proponents respond that while exotic forms of life are conceivable, the fine-tuning argument need not depend on a narrow definition of life: even very broad definitions of “complex organized structure” require constants within narrow ranges, because the issue is the existence of stable atoms, chemistry, and thermodynamic gradients, not carbon specifically.8, 15

Finally, the argument has been challenged on the grounds that it commits a category error by applying engineering concepts (tuning, calibration, design) to fundamental physics. The constants of nature are not dials that were set by anyone; they are features of the mathematical structure of physical law. To speak of them as “fine-tuned” is to import an explanatory framework that presupposes exactly what the argument is trying to establish. Defenders respond that “fine-tuning” is a term of art in physics that predates the philosophical argument and simply refers to the sensitivity of physical outcomes to the values of parameters, without presupposing a tuner.18, 9

Current state of the debate

The fine-tuning argument occupies a distinctive position in contemporary philosophy of religion. Unlike the ontological argument, which many philosophers consider a logical curiosity, or the classical argument from design, which was severely damaged by Darwin’s explanation of biological complexity, the fine-tuning argument engages directly with established results in physics and cosmology. Its empirical basis — the sensitivity of physical outcomes to the values of fundamental constants — is largely uncontroversial among physicists, even those who draw no theistic conclusions from it. The dispute is over what, if anything, the fine-tuning implies.15, 18

Among philosophers of religion, opinion remains sharply divided. Theistic philosophers such as Swinburne, Collins, and Alister McGrath regard fine-tuning as one of the strongest pieces of evidence for God’s existence, particularly when combined with other theistic arguments in a cumulative case. Atheistic and agnostic philosophers tend to favor some combination of the multiverse response, the normalizability objection, and the selection effect critique, though they disagree among themselves about which of these is most compelling.4, 5, 6, 8

Among physicists, the dominant attitude is that fine-tuning is a genuine puzzle that calls for a scientific rather than theological explanation. The multiverse hypothesis, once dismissed as unscientific speculation, has gained significant traction within the physics community, particularly among string theorists and inflationary cosmologists. Susskind, Linde, and Vilenkin have argued that the multiverse is a natural consequence of well-established physical theories, not an ad hoc response to the fine-tuning problem. Others, including Penrose, Ellis, and Paul Steinhardt, have expressed skepticism about the multiverse on both empirical and methodological grounds, arguing that a hypothesis that can accommodate any observation explains nothing.2, 19, 21

The debate is also shaped by open questions in fundamental physics. If a successful theory of quantum gravity is ever developed, it may reveal deeper principles that constrain or determine the values of the constants, potentially dissolving the fine-tuning puzzle entirely. Alternatively, it may confirm the existence of a landscape of possible physical laws, lending further support to the multiverse. Until these questions are resolved, the fine-tuning argument will remain what it has been for several decades: a philosophically provocative inference from real physics, resisted by powerful but not yet decisive objections, and deeply entangled with some of the most fundamental unsolved problems in science.9, 18, 15

References

The Elegant Universe: Superstrings, Hidden Dimensions, and the Quest for the Ultimate Theory