Overview

- Intelligent design (ID) holds that certain features of the natural world — particularly biological systems exhibiting what proponents call irreducible complexity and specified complexity — are best explained by an intelligent cause rather than unguided natural processes, and its principal formulations were developed by Michael Behe and William Dembski in the 1990s.

- ID draws on a long tradition of natural theology stretching from Paley's watchmaker argument through twentieth-century information theory, but it differs from young-earth creationism in accepting an old universe and framing its claims in terms of empirical detection criteria rather than scriptural interpretation.

- Critics raise scientific objections (evolutionary co-option, exaptation, and documented reducible pathways for purportedly irreducible systems), philosophical objections (the argument-from-ignorance structure, the demarcation problem, and the absence of positive predictions), and legal objections, most prominently the 2005 Kitzmiller v. Dover ruling, which held that presenting ID in public-school science classes violates the Establishment Clause and, in doing so, found that ID is not science.

Intelligent design (ID) is the proposition that certain features of the natural world — particularly biological structures of great complexity — are best explained by an intelligent cause rather than by unguided natural processes such as natural selection and random mutation. The argument occupies a distinctive position within the broader family of teleological arguments: it does not appeal to the fine-tuning of physical constants or to the beauty of natural laws, but instead targets specific biochemical and informational features of living systems and claims that empirical criteria can distinguish designed systems from those produced by chance or necessity.2, 3 The two principal theoretical contributions to ID emerged in the 1990s — Michael Behe's concept of irreducible complexity and William Dembski's concept of specified complexity — and together they form the intellectual core of the movement.

ID has generated sustained debate across philosophy, biology, and law. Proponents argue that it identifies a genuine explanatory gap in evolutionary theory and offers a rigorous detection criterion for the products of intelligence. Critics contend that it relies on an argument from ignorance, that the biological systems it cites as irreducibly complex have plausible evolutionary pathways, and that its claims lack the predictive content required of scientific hypotheses.6, 9 The 2005 federal court ruling in Kitzmiller v. Dover Area School District brought these questions into public view: the court held that a school-board policy promoting ID violated the Establishment Clause of the First Amendment and, in reaching that holding, concluded that ID is not science.8 This article examines the historical roots of ID, presents its formal arguments, analyses the scientific and philosophical objections, and assesses its relationship to the broader teleological tradition.

Historical context and natural theology

Intelligent design did not emerge from a vacuum. It draws on a tradition of natural theology that stretches back to antiquity and reached its fullest pre-Darwinian expression in William Paley's Natural Theology (1802). Paley argued that biological organisms exhibit a degree of functional complexity — the optical precision of the eye, the mechanical articulation of the hand, the structural engineering of the skeleton — that far exceeds anything found in human artefacts, and that this complexity requires a designer of correspondingly greater intelligence.1, 14 Paley's watchmaker analogy — if one found a watch on a heath, one would infer a watchmaker from the purposeful arrangement of its parts — became the paradigmatic formulation of the design argument in biology. His reasoning did not merely note that organisms are complex; it argued that the specific fit between structure and function, the arrangement of parts toward the achievement of a purpose, is the signature of intelligent agency.1

Charles Darwin's On the Origin of Species (1859) provided a non-teleological mechanism — natural selection acting on heritable variation — capable of producing the adaptations Paley had catalogued. Over the following century, the neo-Darwinian synthesis integrated Mendelian genetics with population biology, and the design argument in biology fell into disfavour among both scientists and philosophers of science. The argument from cosmic fine-tuning subsequently emerged as the dominant form of teleological reasoning in analytic philosophy of religion, shifting the evidential base from biology to physics.9, 12

Intelligent design represents a return to biology as the evidential base for a design inference, but its proponents have been careful to distinguish it from both Paley's natural theology and young-earth creationism. Unlike Paley, ID proponents do not argue from the general appearance of purpose in nature; they target specific biochemical systems and apply formal probabilistic criteria to assess whether those systems could have arisen without intelligent direction.2, 3 Unlike young-earth creationism, ID does not commit to a literal reading of Genesis, does not dispute the age of the Earth or the universe, and does not identify the designer with the God of any particular religious tradition — at least not as a formal element of the argument.8, 11 Whether this distinction is substantive or merely strategic is itself a matter of dispute, as the Kitzmiller court examined in detail.

Irreducible complexity

Michael Behe, a biochemist at Lehigh University, introduced the concept of irreducible complexity in Darwin's Black Box (1996). Behe defined an irreducibly complex system as "a single system composed of several well-matched, interacting parts that contribute to the basic function, wherein the removal of any one of the parts causes the system to effectively cease functioning."2 The concept targets a specific class of biological structures: those in which multiple components must be present simultaneously for the system to perform its function at all. If such systems cannot function with any part removed, Behe argues, they cannot have been assembled by the gradual, step-by-step process that Darwinian evolution requires, because each intermediate stage — lacking one or more essential parts — would be nonfunctional and therefore invisible to natural selection.2

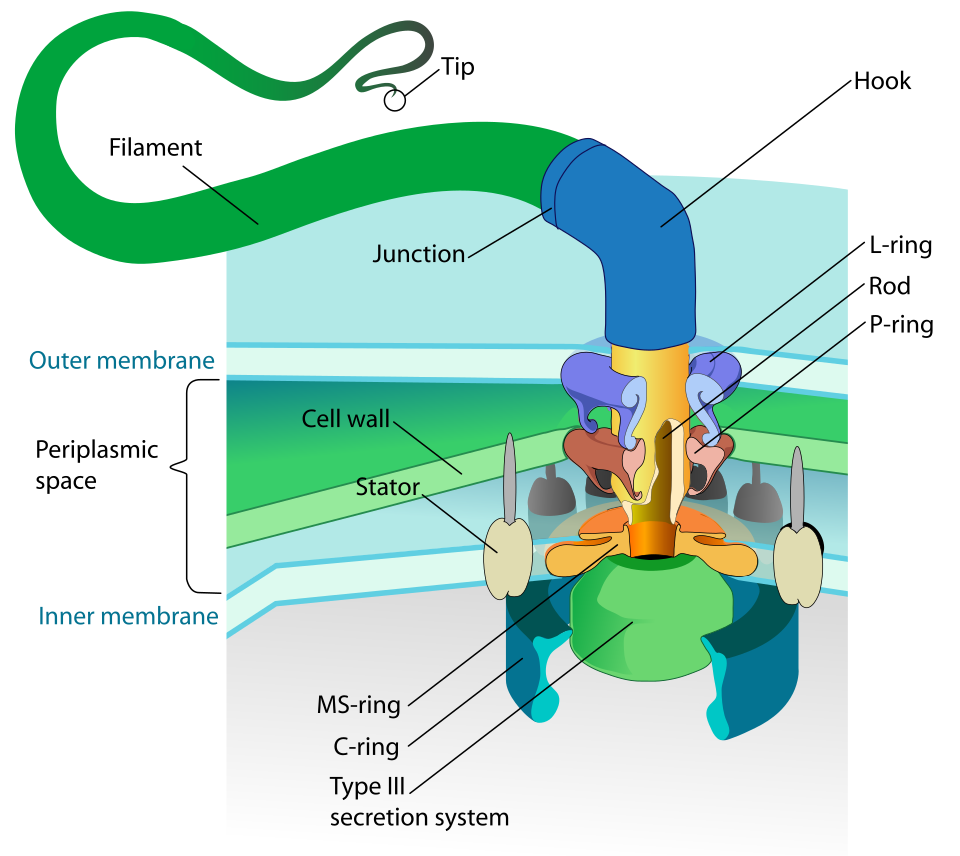

Behe's primary example is the bacterial flagellum, a rotary molecular motor that propels certain bacteria through liquid environments. The flagellum consists of approximately forty protein components, including a basal body embedded in the cell membrane, a hook that acts as a universal joint, and a long helical filament that functions as a propeller. The assembly requires a stator anchored in the cell wall, a rotor driven by the flow of protons across the membrane, a drive shaft, bushings, and an export apparatus that delivers structural proteins to the growing tip of the filament.2, 7 Behe argued that removing any of these components would render the flagellum nonfunctional, that no Darwinian pathway of gradual assembly could account for the simultaneous origin of all necessary parts, and that the system therefore bears the hallmark of intelligent design.2

A second example Behe discussed at length is the blood clotting cascade in vertebrates. The cascade involves a sequence of proteolytic activations in which each clotting factor activates the next in a chain, ultimately converting soluble fibrinogen into insoluble fibrin strands that form a clot. Behe argued that the cascade is irreducibly complex because the removal of any single factor would prevent clot formation entirely, leaving the organism unable to stop bleeding from wounds. He contended that the intricate interdependence of the cascade's components — each precisely calibrated to activate the next without triggering uncontrolled coagulation — defied explanation by a stepwise evolutionary process.2

The rhetorical force of irreducible complexity lies in its challenge to gradualism. Darwinian evolution proceeds by the accumulation of small modifications, each of which must confer a selective advantage (or at least not impose a selective disadvantage) on the organism. If a system genuinely requires all of its parts to function at all, then the intermediate stages in its evolution would have been nonfunctional and therefore could not have been preserved by selection. Behe does not claim that all biological complexity is irreducible; he claims that certain specific systems at the biochemical level are, and that these systems constitute empirical evidence for the intervention of an intelligent agent.2

Specified complexity and the explanatory filter

William Dembski, a mathematician and philosopher, developed a complementary theoretical framework for detecting design. In The Design Inference (1998) and No Free Lunch (2002), Dembski argued that intelligent agency leaves a distinctive empirical signature — specified complexity — and that a systematic method, which he called the explanatory filter, can reliably detect it.3, 4

Dembski's framework rests on a distinction between three types of explanation: necessity (law-like regularity), chance (stochastic processes), and design (intelligent agency). The explanatory filter is a sequential decision procedure. First, one asks whether the event in question is the product of a law-like regularity — whether it had to happen given the relevant natural laws. If the event is not necessitated by law, one asks whether it can be adequately explained by chance — whether it falls within the range of outcomes that stochastic processes are likely to produce. Only if the event is neither necessitated by law nor adequately explained by chance does one infer design.3

The key criterion at the final stage is specified complexity. An event exhibits specified complexity when it is both highly improbable (complex) and conforms to an independently given pattern (specified). Dembski illustrates the distinction with an analogy: an archer shooting an arrow at a large wall and then painting a target around wherever the arrow lands produces a pattern that is specified after the fact but not independently specified; an archer shooting at a target that was painted on the wall before the shot, and hitting the bullseye, produces an outcome that is both improbable and independently specified.3 Biological systems such as the bacterial flagellum, Dembski argues, exhibit this kind of specified complexity: they are astronomically improbable arrangements of matter that also conform to an independently recognisable functional pattern (a working rotary motor), and this combination of improbability and independent specification is, in human experience, reliably produced only by intelligent agents.4

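Dembski formulated a universal probability bound of approximately $10^{-150}$, obtained as the reciprocal of the product of three quantities: the estimated number of elementary particles in the observable universe (approximately $10^{80}$), the maximum number of state transitions per second permitted by quantum mechanics (approximately $10^{45}$, the inverse of the Planck time), and a deliberately generous upper limit on the age of the universe in seconds (approximately $10^{25}$, far more than the roughly $10^{17}$ seconds that have elapsed since the Big Bang). Since $10^{80} \times 10^{45} \times 10^{25} = 10^{150}$, at most about $10^{150}$ elementary events can have occurred in the history of the universe. Any event whose probability falls below this bound, Dembski argued, exhausts the probabilistic resources of the entire universe and cannot be attributed to chance.4 If such an event also exhibits specification, the explanatory filter yields a design inference.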
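The sequential structure of the filter can be made concrete with a short sketch. The code below is an illustration written for this article, not Dembski's own formalism: the names `Verdict`, `explanatory_filter`, and `UNIVERSAL_PROBABILITY_BOUND` are hypothetical, and the function simply takes the chance probability and the specification judgement as inputs, although estimating those inputs for a real biological system is the contested part of the argument.

```python
from enum import Enum
from fractions import Fraction

# The universal probability bound as stated in the text: 1 in 10^150.
UNIVERSAL_PROBABILITY_BOUND = Fraction(1, 10**150)


class Verdict(Enum):
    NECESSITY = "necessity"
    CHANCE = "chance"
    DESIGN = "design"


def explanatory_filter(necessitated_by_law: bool,
                       chance_probability: Fraction,
                       independently_specified: bool,
                       bound: Fraction = UNIVERSAL_PROBABILITY_BOUND) -> Verdict:
    """Illustrative three-stage decision procedure (hypothetical sketch)."""
    # Stage 1: a law-like regularity accounts for the event.
    if necessitated_by_law:
        return Verdict.NECESSITY
    # Stage 2: the event is not improbable enough to rule out chance.
    if chance_probability >= bound:
        return Verdict.CHANCE
    # Stage 3: an event below the bound is attributed to design only if
    # it also matches an independently given pattern (specification).
    if independently_specified:
        return Verdict.DESIGN
    return Verdict.CHANCE


# 500 fair coin flips matching a sequence written down in advance:
# the probability 2^-500 lies below the 10^-150 bound.
prespecified = explanatory_filter(False, Fraction(1, 2**500), True)   # Verdict.DESIGN
# The same 500 flips with no independent pattern stay with chance.
unspecified = explanatory_filter(False, Fraction(1, 2**500), False)   # Verdict.CHANCE
```

Exact fractions are used because $10^{-150}$ and $2^{-500}$ sit close to the limits of floating-point precision. Note that the sketch makes the eliminative character of the procedure visible: design is returned only when the two earlier branches have been ruled out.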

The formal argument

The central argument of intelligent design can be reconstructed in premise-conclusion form. The following formulation synthesises the core claims of both Behe and Dembski into a single inferential structure:2, 3, 4

P1. Some biological systems exhibit specified complexity — they are both highly improbable and conform to an independently given functional pattern.

P2. Specified complexity is reliably produced only by intelligent agents; unguided natural processes (necessity and chance) do not produce specified complexity.

C. Therefore, an intelligent agent is the best explanation for those biological systems.

The argument is abductive: it claims that design is the best available explanation for a class of observed phenomena, not that design is logically entailed by the premises. It is an inference to the best explanation, and its strength depends on whether the premises are true and whether the inference is warranted.

P1 is an empirical claim. Its defence rests on the detailed case studies presented by Behe (the bacterial flagellum, the blood clotting cascade, the immune system) and on Dembski's probabilistic calculations, which aim to show that the probability of these systems arising by unguided processes falls below the universal probability bound.2, 4 The premise is contested by biologists who argue that the systems in question have plausible evolutionary pathways and are not, in the relevant sense, specified in a way that excludes natural explanation.

P2 is a generalisation from human experience. In every known case where specified complexity has been traced to its source — in language, technology, software, architecture — that source has been an intelligent agent.3 Critics challenge this premise on the ground that it assumes what it sets out to prove: by defining specified complexity in terms of the outputs of intelligence, the framework may classify the products of evolution as designed by definitional fiat rather than by empirical discovery.6

If both premises are true and the inference is sound, the conclusion follows that an intelligent agent is the best explanation for the biological systems in question. The argument does not identify the designer, does not specify the mechanism of design, and does not claim that all biological complexity is designed — only that certain systems bear a signature that, by Dembski's criteria, warrants a design inference.3, 4

Scientific objections

The scientific case against intelligent design centres on the claim that the biological systems cited as irreducibly complex have plausible evolutionary histories, and that the concept of irreducible complexity rests on a misunderstanding of how evolution builds complex structures.

The most extensively discussed example is the bacterial flagellum. Kenneth Miller, a cell biologist at Brown University, pointed out that a subset of approximately ten flagellar proteins is homologous to the components of the type III secretion system (T3SS), a needle-like apparatus used by certain pathogenic bacteria to inject toxins into host cells.5 The T3SS is a functional molecular machine in its own right, performing a useful biological role (protein secretion) with a smaller set of components than the complete flagellum. Miller argued that the T3SS demonstrates that a subset of the flagellar components can perform a function other than propulsion, and that the flagellum could therefore have evolved by the co-option of a pre-existing secretion system, with additional components recruited incrementally to produce rotary motility.5

Subsequent phylogenetic analysis by Abby and Rocha (2012) provided evidence that the non-flagellar T3SS actually evolved from the flagellum rather than the reverse, indicating that the flagellar apparatus is the ancestral structure and the secretion system is a derivative.10 This finding complicates Miller's specific historical reconstruction but does not undermine the broader evolutionary point: the research demonstrates that components of complex molecular machines can be co-opted for different functions, that functional subsystems can be derived from larger systems by deletion and modification, and that the evolutionary history of such systems involves extensive modular rearrangement rather than the simultaneous assembly of all parts.10

The general evolutionary mechanism at work is exaptation — the process by which a structure that originally evolved for one function is co-opted for a different function. Exaptation is well documented throughout evolutionary biology: feathers originally evolved for thermoregulation or display and were later co-opted for flight; the bones of the mammalian middle ear evolved from jaw bones in reptilian ancestors; the swim bladder of bony fish evolved from a structure used for respiration.5 If the components of an irreducibly complex system each served a different function before being recruited into the current system, then the system need not have been assembled all at once; it could have been built incrementally from pre-existing parts, each of which was maintained by selection for its original function.5, 6

Behe's claim about the blood clotting cascade has also been challenged on empirical grounds. Comparative genomic studies have shown that several components of the mammalian clotting cascade are absent in other vertebrate lineages — puffer fish, for example, lack several clotting factors present in mammals — yet these organisms clot their blood effectively with a simpler version of the cascade.5 This suggests that the mammalian cascade was assembled incrementally, with new factors added to an ancestral system that functioned with fewer components, directly contradicting the claim that the removal of any single factor renders the system nonfunctional.

A further line of critique targets the inference from present irreducibility to unevolvability. Even if a system is irreducibly complex in its current form (that is, even if removing any part now renders it nonfunctional), it does not follow that the system was always irreducibly complex. A system can become irreducibly complex through a process in which components are added as enhancements and then become essential as the system adapts to depend on them, or in which supporting components are later lost, much as scaffolding is necessary during the construction of an arch but can be removed once the keystone is in place. The finished arch is "irreducibly complex" in that removing the keystone causes collapse, yet it was built incrementally with the aid of removable supports.5, 6

Philosophical objections

The philosophical critique of intelligent design is distinct from the scientific critique and targets the logical structure of the argument rather than the truth of its empirical premises.

The most prominent philosophical objection is that ID constitutes an argument from ignorance — an inference that moves from the absence of a known natural explanation to the conclusion that no natural explanation exists, and thence to the positive conclusion that an intelligent agent is responsible. Dembski's explanatory filter explicitly operates by elimination: design is inferred only after necessity and chance have been ruled out. Critics argue that this structure makes the design inference parasitic on the current state of scientific knowledge. If a natural pathway for the bacterial flagellum is unknown today, the filter yields a design inference; if a pathway is discovered tomorrow, the inference is retracted. The design conclusion is therefore not a discovery about the world but a placeholder for scientific ignorance, and it retreats as scientific knowledge advances.6, 11

Dembski has responded to this objection by arguing that the explanatory filter does not merely rely on the absence of a known explanation but on a positive detection criterion — specified complexity — that provides evidential warrant for a design inference independent of what is currently unknown. The specified complexity of a biological system, Dembski contends, is an objective feature of the system itself, not a subjective assessment of our current inability to explain it.3, 4 Critics reply that the objectivity of specification has not been demonstrated: the patterns Dembski identifies as independently given (such as "rotary motor" or "clotting cascade") are functional descriptions imposed by the observer, and it is not clear that the universe comes pre-labelled with specifications that can serve as detection criteria for design.6

A second philosophical objection concerns the demarcation problem — the question of what distinguishes science from non-science. The demarcation problem is itself philosophically contentious, and no single criterion has achieved universal acceptance among philosophers of science. Falsifiability, as proposed by Karl Popper, is one candidate criterion, and critics of ID have argued that the design hypothesis is unfalsifiable because ID does not specify the designer's identity, intentions, methods, or constraints, and therefore generates no testable predictions about what the designer would or would not create.6, 11 Sober has articulated a related but more precise objection: the design hypothesis, taken on its own, predicts nothing about the biological world unless it is supplemented with auxiliary hypotheses about what the designer intended. Without independent evidence about the designer's goals and capacities, the hypothesis "an intelligent agent designed this system" is compatible with any observation whatsoever, and a hypothesis that is compatible with any observation has no evidential content.6
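Sober's point can be put in likelihood terms. The following is an illustrative formalisation rather than a quotation; the symbols $O$ (an observation), $D$ (the bare design hypothesis), $E$ (a rival evolutionary hypothesis), and $A_i$ (mutually exclusive auxiliary hypotheses about the designer's goals and abilities) are introduced here only for the sketch.

```latex
% O favours D over E only if the likelihood ratio exceeds 1.
\[
  \frac{P(O \mid D)}{P(O \mid E)} > 1,
  \qquad\text{where}\qquad
  P(O \mid D) \;=\; \sum_i P(O \mid D \wedge A_i)\, P(A_i \mid D).
\]
```

Each term $P(O \mid D \wedge A_i)$ may be well defined, but without independent evidence about the weights $P(A_i \mid D)$ the sum can take almost any value, so the ratio on the left cannot be evaluated.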

Proponents of ID have responded that many scientific hypotheses are compatible with a wide range of observations and require auxiliary assumptions to generate specific predictions — this is not unique to design. Dembski has also argued that the demand for positive predictions mischaracterises the structure of the argument: ID is not a mechanistic theory that predicts specific outcomes but a detection framework that identifies the products of intelligence after the fact, analogous to forensic science or the Search for Extraterrestrial Intelligence (SETI), which also infer intelligent agency from patterns in observed data without specifying the intentions of the agent in advance.4

A third objection holds that the ID argument is essentially negative — it argues against the adequacy of natural explanations rather than for a positive account of how design operates. The argument does not specify when the designer acted, by what mechanism the design was implemented, or what the designer's goals were. It does not predict which biological systems should be designed and which should not. In this respect, critics argue, ID lacks the explanatory depth expected of a productive theory: it identifies a purported phenomenon (design) without offering any account of the process by which design is implemented in the natural world.6, 11

Legal context: Kitzmiller v. Dover

The legal status of intelligent design in American public education was addressed in Kitzmiller v. Dover Area School District, decided on December 20, 2005, by Judge John E. Jones III of the United States District Court for the Middle District of Pennsylvania. The case arose after the Dover Area School Board in York County, Pennsylvania, adopted a policy in October 2004 requiring that ninth-grade biology students hear a statement presenting intelligent design as an alternative to evolution and referring them to the supplementary textbook Of Pandas and People.8

In a 139-page opinion, Judge Jones ruled that the school board's policy violated the Establishment Clause of the First Amendment. The court's analysis proceeded on three grounds. First, the court examined the historical relationship between ID and creationism, tracing the development of Of Pandas and People through successive drafts in which the word "creationism" was systematically replaced with "intelligent design" following the Supreme Court's 1987 decision in Edwards v. Aguillard, which struck down the teaching of "creation science" in public schools. The court found that this textual history demonstrated that ID is "creationism re-labeled."8

Second, the court evaluated whether intelligent design qualifies as science. After hearing testimony from scientific and philosophical experts on both sides, Judge Jones concluded that ID "violates the centuries-old ground rules of science by invoking and permitting supernatural causation"; that it relies on the same flawed argument — an argument from ignorance — that doomed creation science; that its negative arguments against evolution have been refuted by the scientific community; and that it has not generated peer-reviewed research, empirical testing, or scientific publication commensurate with a scientific theory.8

Third, the court found that the school board members who adopted the policy were motivated by religious purposes rather than genuine pedagogical concerns. Internal communications and public statements by board members revealed an explicit intention to promote religious belief through the biology curriculum.8

The Kitzmiller decision is a trial-court ruling and therefore does not bind other courts as precedent. It was not appealed and has not been overturned, and no comparable case has subsequently reached a federal appellate court or the Supreme Court. The ruling did, however, have a substantial practical effect: no other public school district in the United States has subsequently adopted a policy requiring the teaching of intelligent design in science classes.8

Responses to objections

Proponents of intelligent design have offered responses to each of the major categories of objection. The adequacy of these responses is itself disputed, and the exchange between proponents and critics illuminates the philosophical structure of the debate.

In response to the scientific objections regarding evolutionary pathways for the bacterial flagellum, Behe has argued that demonstrating homology between the flagellum and the T3SS does not constitute a Darwinian explanation. Homology establishes that two systems share common components, but it does not demonstrate how one system was transformed into the other through a series of functional intermediates, each preserved by natural selection. The challenge, Behe contends, is not merely to identify components that appear in both systems but to specify a detailed, step-by-step evolutionary pathway in which each intermediate stage is functional and selectively advantageous.2 Critics reply that this demand for a complete step-by-step pathway sets an unreasonably high evidential standard that is not met by most accepted accounts in evolutionary biology, where historical reconstructions are necessarily incomplete because the intermediate organisms are extinct and the fossil record at the molecular level is sparse.5

In response to the argument-from-ignorance objection, Dembski has maintained that the explanatory filter is not an argument from ignorance but a positive empirical detection method. The filter does not merely note the absence of a known natural cause; it identifies the presence of a positive signature — specified complexity — that is, in human experience, invariably associated with intelligent agency. Dembski draws an analogy to the methods of archaeology and forensic science, where artefacts and crime scenes are attributed to intelligent agency on the basis of patterns that natural processes do not produce, without requiring knowledge of the specific agent or mechanism involved.3, 4 Sober has responded that the analogy is misleading: in forensic science and archaeology, the investigators have background knowledge about the kinds of agents involved (humans with known capacities and motives), whereas the intelligent designer posited by ID is an agent of unspecified nature, and without background knowledge of the designer's intentions, the design hypothesis generates no specific expectations about what the designed products should look like.6

In response to the demarcation objection, some ID proponents have questioned whether the demarcation problem itself has a solution. If no clear criterion distinguishes science from non-science — and the philosophical literature on demarcation is extensive and unresolved — then the charge that ID is "not science" carries less force than it appears to, since the boundary being invoked is itself contested.11 Larry Laudan, a philosopher of science who is not an ID proponent, has argued that the demarcation problem is intractable and that the question of whether a theory is "scientific" is less important than whether its claims are well-supported by evidence.11 Critics of ID acknowledge the difficulty of the demarcation problem but maintain that, whatever the precise boundary of science, ID falls on the wrong side of it because it invokes an unobservable, untestable agent and produces no novel empirical predictions.6

Relationship to broader teleological arguments

Intelligent design occupies a specific niche within the broader family of teleological arguments. It shares with classical design arguments the inference from biological complexity to intelligent causation, and it shares with the fine-tuning argument the claim that certain features of the natural world bear the signature of purposeful arrangement. But it differs from both in important respects.

The fine-tuning argument operates at the level of fundamental physics, arguing that the values of the physical constants and initial conditions of the universe are calibrated within narrow ranges compatible with the existence of complex structures and life. The fine-tuning argument does not claim that evolution cannot produce biological complexity; it claims that the very laws and conditions under which evolution operates require explanation, and that intelligent design of the cosmic parameters is the best such explanation.9, 12 Intelligent design, by contrast, operates at the level of biology and claims that evolution cannot produce certain specific biological structures, a much stronger and more empirically vulnerable claim.

Richard Swinburne's teleological argument, developed within a Bayesian framework, treats the orderliness of the universe — the existence of simple, universal natural laws — as evidence for a rational cosmic designer. Swinburne's argument does not depend on the failure of any particular scientific explanation; it argues that the existence of laws as such calls for a higher-level explanation that science cannot provide from within its own framework.12 ID, by contrast, makes claims that are directly testable against the findings of molecular biology and evolutionary genetics, and its fortunes are therefore tied to the progress of those sciences in a way that Swinburne's cosmic teleological argument is not.

Graham Oppy has noted that the relationship between ID and other teleological arguments is a source of both strength and vulnerability for the teleological tradition as a whole. If ID's biological claims are empirically refuted, the refutation does not extend to the fine-tuning argument or to Swinburne's argument from laws, which operate on entirely different evidential bases. Conversely, the success of the fine-tuning argument would not vindicate ID's specific biological claims.13 The teleological tradition is thus a family of arguments that stand or fall independently, united by a common inferential pattern — from order to intelligence — but differing in their premises, their vulnerability to objections, and their relationship to empirical science.

ID claims and scientific responses

The following table summarises the principal claims of intelligent design and the corresponding scientific and philosophical responses that have been advanced in the literature.2, 5, 6, 10

Intelligent design claims and responses2, 5, 6

| ID claim | Scientific or philosophical response |
|---|---|
| The bacterial flagellum is irreducibly complex and cannot have evolved gradually | Homologous subsystems (e.g., T3SS) perform different functions with fewer components; co-option and exaptation provide evolutionary pathways |
| The blood clotting cascade requires all components to function | Comparative genomics shows simpler cascades in other vertebrates (e.g., puffer fish) that function with fewer factors |
| Specified complexity is produced only by intelligent agents | Natural selection operating on variation produces functional complexity; the premise may beg the question by defining the signature of design in terms of the outputs of intelligence |
| The explanatory filter reliably detects design | The filter rests on elimination of known natural causes, making it an argument from ignorance vulnerable to future scientific discovery |
| ID is distinct from creationism | The textual history of Of Pandas and People shows systematic replacement of "creationism" with "intelligent design" after Edwards v. Aguillard (1987) |
| ID makes no claim about the identity of the designer | This agnosticism deprives the hypothesis of predictive content: without knowing the designer's intentions, ID generates no testable expectations |
| The universal probability bound ($10^{-150}$) establishes that chance cannot explain biological complexity | The bound conflates the probability of a specific outcome with the probability of any functional outcome; the relevant probability space is not well defined |

The table illustrates the point-counterpoint structure of the ID debate. Each claim advanced by proponents has generated a specific response, and each response has in turn elicited further rejoinders. The exchange continues to be examined in the philosophy of science, philosophy of biology, and philosophy of religion, where the logical structure of design inferences, the evidential standards appropriate to historical sciences, and the relationship between scientific explanation and metaphysical interpretation remain active areas of inquiry.9, 13
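The arithmetic behind the last row of the table can be made concrete with a short sketch. Dembski derives the bound by multiplying three order-of-magnitude estimates (roughly 10⁸⁰ elementary particles, 10⁴⁵ state changes per second, and 10²⁵ seconds), giving 10¹⁵⁰ possible events. The Python below reproduces that product and then contrasts the chance of hitting one particular sequence with the chance of hitting any member of a set of functional sequences. The alphabet size, the sequence length, and above all the number of functional sequences are hypothetical values chosen for illustration, not empirical estimates, and the uniform random-draw model is itself a simplification that critics dispute.

```python
from fractions import Fraction

# Order-of-magnitude estimates used in Dembski's derivation of the bound.
PARTICLES = 10**80               # elementary particles in the observable universe
TRANSITIONS_PER_SECOND = 10**45  # maximum state changes per second
SECONDS = 10**25                 # generous upper limit on available time

# Maximum number of elementary events, and the resulting probability bound.
max_events = PARTICLES * TRANSITIONS_PER_SECOND * SECONDS
universal_bound = Fraction(1, max_events)  # 10^-150


def p_specific(alphabet_size: int, length: int) -> Fraction:
    """Probability that one uniform random draw yields one particular sequence."""
    return Fraction(1, alphabet_size**length)


def p_any_functional(alphabet_size: int, length: int, functional_count: int) -> Fraction:
    """Probability that one uniform random draw yields any functional sequence."""
    return Fraction(functional_count, alphabet_size**length)


# HYPOTHETICAL inputs, for illustration only (not measured values).
ALPHABET = 20                  # e.g. the number of standard amino acids
LENGTH = 150                   # assumed sequence length
FUNCTIONAL_COUNT = 10**100     # assumed size of the functional set

single_target = p_specific(ALPHABET, LENGTH)
any_target = p_any_functional(ALPHABET, LENGTH, FUNCTIONAL_COUNT)

print("single target below bound:", single_target < universal_bound)
print("any functional target below bound:", any_target < universal_bound)
```

Under these assumed numbers the single-target probability falls below the bound while the any-functional-target probability does not, which is the distinction the objection turns on. Different assumptions about the size of the functional set would give different results, which is why critics say the relevant probability space must be specified before the bound can be applied.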

Contemporary assessment

Assessing intelligent design requires distinguishing between its logical structure, its empirical content, and its philosophical implications. As a logical structure, the core argument is a valid instance of inference to the best explanation: if specified complexity is found in biological systems (P1), and if specified complexity is reliably produced only by intelligent agents (P2), then an intelligent agent is the best explanation. The question is whether the premises are true and whether the inference is warranted given the available evidence.3, 6

The empirical status of P1 is contested. Behe's case studies have been challenged by detailed scientific work on the evolutionary history of the systems he cites, and the concept of irreducible complexity has been shown to be compatible with gradual evolutionary assembly through co-option, exaptation, and scaffold-dependent construction. The claim that specific biological systems cannot have evolved through natural processes is an empirical claim that must be evaluated against the evidence from comparative genomics, structural biology, and evolutionary genetics, and the weight of that evidence has shifted against the irreducibility claims since Darwin's Black Box was published in 1996.5, 10

The status of P2 involves both empirical and conceptual questions. The empirical question is whether specified complexity is in fact found only in the products of intelligent agents. The conceptual question, raised by Sober and others, is whether the concept of specification is sufficiently well defined to serve as an objective detection criterion, or whether it covertly imports the observer's functional categories into a framework that claims to discover design rather than impose it.6

The philosophical significance of ID extends beyond the question of its truth or falsity. The debate has clarified important questions about the nature of scientific explanation, the standards of evidence appropriate to historical sciences, and the relationship between methodological naturalism (the commitment to seeking natural explanations for natural phenomena) and metaphysical naturalism (the philosophical position that nothing beyond the natural world exists).11 ID proponents argue that methodological naturalism is an arbitrary restriction that prevents science from following the evidence wherever it leads. Critics respond that methodological naturalism is not an arbitrary rule but a defining feature of scientific inquiry, without which the category of "scientific explanation" loses its content and any phenomenon can be attributed to an unobservable agent.6, 11

Whether intelligent design constitutes a productive research programme, a legitimate philosophical position outside the domain of empirical science, or a religiously motivated challenge to evolutionary biology remains a matter of active dispute. What is clear is that the debate has forced a more careful examination of the concepts of complexity, information, design detection, and the proper boundaries of scientific explanation — questions that belong as much to philosophy as to biology, and that continue to repay careful analysis regardless of one's assessment of the ID movement's specific claims.9, 13

References

Finding Darwin's God: A Scientist's Search for Common Ground between God and Evolution

The Flagellar Motor of Caulobacter crescentus Generates More Torque When a Cell Swims Backwards

The Non-Flagellar Type III Secretion System Evolved from the Bacterial Flagellum and Diversified into Host-Cell Adapted Systems