Overview

- Philosophical cosmology examines the intersection of modern astrophysics with ancient questions about the origin, structure, and purpose of the universe, spanning traditions from Aristotle and Aquinas to contemporary debates over fine-tuning and the multiverse.

- Discoveries such as the Big Bang, the fine-tuning of physical constants, and the cosmic horizon have revived classical philosophical questions about whether the universe requires an external cause, whether its life-permitting properties demand explanation, and whether science alone can address ultimate 'why' questions.

- The field remains genuinely contested: theistic, naturalistic, and agnostic positions each claim support from the same physical evidence, and the debate hinges as much on philosophical assumptions about explanation, probability, and the scope of science as on empirical data.

Philosophical cosmology is the branch of inquiry that addresses the deepest questions raised by the study of the universe as a whole: why anything exists at all, whether the cosmos had a beginning or has persisted eternally, whether its apparent order and life-permitting structure require explanation, and whether empirical science can in principle resolve such questions or whether they lie beyond its reach. These questions are as old as philosophy itself, but they have been transformed in the modern era by the discoveries of observational cosmology, above all the expansion of the universe, the cosmic microwave background, and the apparent fine-tuning of the physical constants that govern the behaviour of matter and energy.18

The subject sits at the intersection of physics, metaphysics, and theology. It draws contributors from all three disciplines, and the resulting debates are shaped as much by differing assumptions about the nature of explanation, the scope of scientific method, and the meaning of probability as by the empirical data themselves. This article surveys the major themes of philosophical cosmology from its ancient origins to the contemporary controversies over fine-tuning, the anthropic principle, cosmological arguments for a first cause, and the multiverse hypothesis. It aims to present theistic, naturalistic, and agnostic perspectives on each question with equal fidelity, noting where genuine consensus exists and where the disagreement is irreducibly philosophical.

Ancient and medieval cosmological thought

Philosophical reflection on the cosmos long predates the scientific method. The pre-Socratic philosophers of ancient Greece were among the first thinkers to seek naturalistic explanations for the origin and structure of the world. Thales of Miletus proposed that water was the fundamental substance underlying all things, while Anaximander posited an indefinite or boundless principle (the apeiron) from which all worlds arise and into which they return. These early cosmogonies were philosophical rather than empirical, but they established the enduring pattern of seeking a unifying principle behind the apparent diversity of nature.6

Plato's Timaeus, composed in the fourth century BCE, presented the universe as the work of a divine craftsman (the Demiurge) who imposed rational order on pre-existing matter according to eternal mathematical forms. Aristotle, by contrast, argued in the Physics and Metaphysics for an eternal universe set in motion by an Unmoved Mover, a necessary being whose existence explains why change occurs at all. Aristotle's cosmology dominated Western thought for nearly two millennia and profoundly shaped the medieval synthesis of philosophy and theology.6

In the medieval Islamic tradition, theologians of the kalām school developed arguments that the universe must have had a temporal beginning, on the grounds that an actually infinite series of past events is impossible. Thomas Aquinas took a different route in his Summa Theologiae (c. 1265–1274), where he presented five arguments for the existence of God. Three of these, the arguments from motion, efficient causation, and contingency, are forms of what is now called the cosmological argument: each proceeds from a general feature of the observable world to the existence of a first cause or necessary being that is not itself contingent on anything else.1 Unlike the kalām theologians, Aquinas was careful to distinguish his philosophical arguments from the theological doctrine of creation ex nihilo: he maintained that reason alone could not demonstrate whether the universe had a temporal beginning, but that it could demonstrate the existence of a sustaining cause.

The emergence of scientific cosmology

The transition from philosophical speculation to observational cosmology began in the early twentieth century. In 1927, the Belgian physicist and Catholic priest Georges Lemaître derived from Einstein's general relativity a model of an expanding universe. In 1931 he proposed that the expansion, if extrapolated backward in time, implied an initial state of extreme density, which he came to call the "primeval atom."3

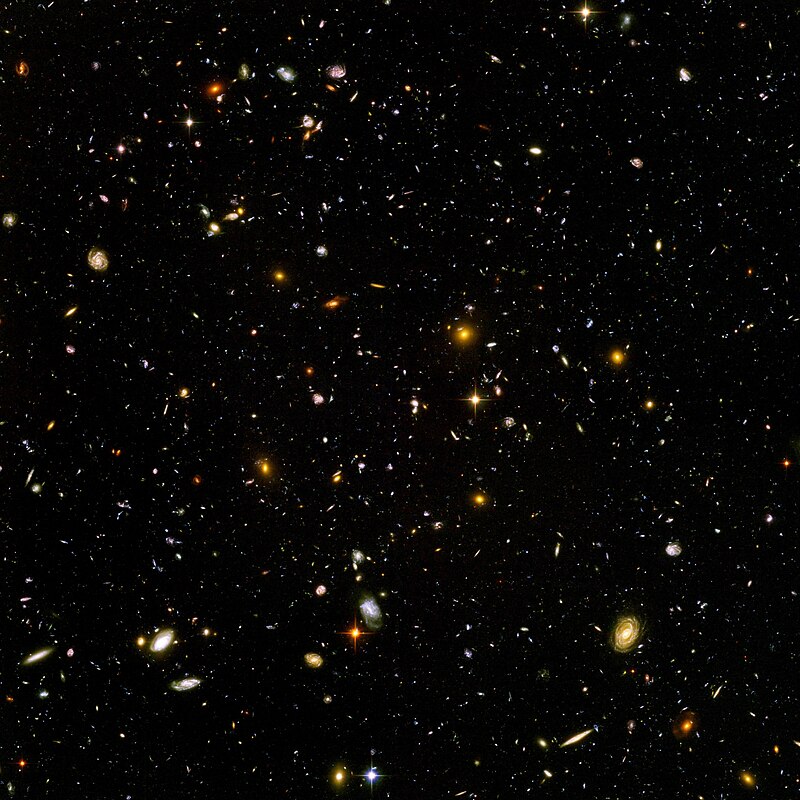

Edwin Hubble's 1929 observations of the recession of distant galaxies confirmed that the universe is indeed expanding, providing the first empirical foundation for what would become the Big Bang model.

The philosophical implications were immediately apparent and deeply contested. Some welcomed the convergence of the new cosmology with the traditional doctrine of creation. Pope Pius XII, in a 1951 address to the Pontifical Academy of Sciences, declared that the new cosmology confirmed the opening words of Genesis. Others resisted any such association. Fred Hoyle, Hermann Bondi, and Thomas Gold proposed the steady-state model in 1948, in which the universe has no beginning and continuously generates new matter to maintain a constant average density as it expands.4 Hoyle, an atheist, later acknowledged that his philosophical preferences had partly motivated the steady-state hypothesis, and it was Hoyle who coined the term "Big Bang," often taken as a dismissive label, in a 1949 BBC radio broadcast. The discovery of the cosmic microwave background in 1965 decisively favoured the Big Bang model over the steady state, but the philosophical questions it raised about cosmic origins remain open.18

Lemaître himself urged caution about drawing theological conclusions from physics. He insisted that his primeval-atom hypothesis was a scientific theory, not a proof of creation, and that the initial singularity predicted by general relativity should not be equated with the divine act described in Genesis.3 This distinction between the physical concept of a cosmic beginning and the metaphysical concept of creation remains central to philosophical cosmology.

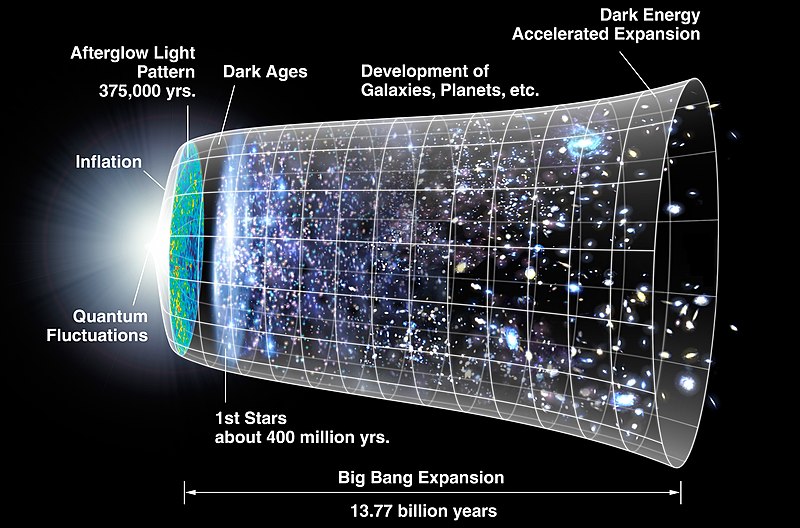

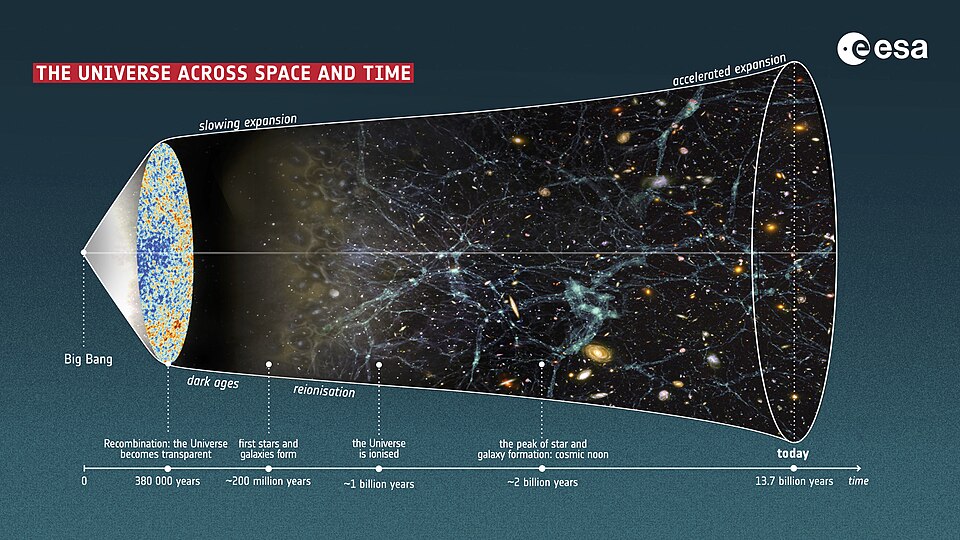

The Big Bang and the question of a beginning

The standard Big Bang model, combined with the Planck satellite's measurements of the cosmic microwave background, establishes that the observable universe has been expanding and cooling for approximately 13.8 billion years from an initial state of extraordinarily high temperature and density.24 In the classical formulation of general relativity, this expansion traces back to an initial singularity at which the density, temperature, and curvature of spacetime become infinite, and the equations of physics break down. Whether this mathematical singularity represents an actual physical beginning of the universe or merely the boundary of our current theoretical understanding is one of the central questions in philosophical cosmology.
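The figure of roughly 13.8 billion years can be illustrated with a short calculation. The following Python sketch assumes a spatially flat universe containing only matter and a cosmological constant (radiation is neglected) and uses Planck-like input values, a Hubble constant of 67.4 km/s/Mpc and a matter density parameter of 0.315. These inputs and all function and constant names are assumptions made for illustration; they are not given in the text above.

```python
import math

# Assumed, Planck-like inputs (illustrative; not stated in the article).
H0_KM_S_MPC = 67.4            # Hubble constant in km/s/Mpc
OMEGA_MATTER = 0.315          # matter density parameter

KM_PER_MPC = 3.0857e19        # kilometres in one megaparsec
SECONDS_PER_GYR = 3.15576e16  # seconds in one billion Julian years


def hubble_time_gyr(h0_km_s_mpc: float) -> float:
    """Return the Hubble time 1/H0 in billions of years."""
    h0_per_second = h0_km_s_mpc / KM_PER_MPC
    return 1.0 / h0_per_second / SECONDS_PER_GYR


def flat_lcdm_age_gyr(h0_km_s_mpc: float, omega_matter: float) -> float:
    """Age of a flat universe with matter and a cosmological constant only.

    Uses the closed-form result
        t0 = 2 / (3 H0 sqrt(OL)) * asinh(sqrt(OL / OM)),
    where OL = 1 - OM because the model is assumed spatially flat.
    """
    omega_lambda = 1.0 - omega_matter
    factor = (2.0 / (3.0 * math.sqrt(omega_lambda))) * math.asinh(
        math.sqrt(omega_lambda / omega_matter)
    )
    return factor * hubble_time_gyr(h0_km_s_mpc)


if __name__ == "__main__":
    print(f"Hubble time: {hubble_time_gyr(H0_KM_S_MPC):.2f} Gyr")
    print(f"Model age:   {flat_lcdm_age_gyr(H0_KM_S_MPC, OMEGA_MATTER):.2f} Gyr")
```

Under these assumptions the model age comes out somewhat below the Hubble time and close to the quoted value. The calculation describes the expansion history only; it does not settle whether the extrapolation to an initial singularity is physically meaningful, which is the question at issue here.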

Several proposals in theoretical physics attempt to describe the earliest moments of the cosmos without invoking a true singularity. The Hartle-Hawking no-boundary proposal, published in 1983, applies quantum cosmology to suggest that spacetime is finite but has no boundary in the past: time as we know it emerges gradually from a quantum state in which the distinction between time and space dissolves.10 Stephen Hawking argued that this proposal rendered the question of a creator unnecessary, since there was no initial moment at which creation could have occurred. Critics have responded that the no-boundary proposal does not eliminate the metaphysical question of why there exists a quantum state of this kind rather than nothing at all, and that eliminating a temporal boundary is not the same as eliminating the need for an ontological ground of existence.19

The Borde-Guth-Vilenkin (BGV) theorem, published in 2003, demonstrates that any universe which has, on average, been expanding throughout its history cannot be infinite in the past but must possess a past spacetime boundary.14 This result applies broadly across inflationary cosmologies and does not depend on any specific energy condition. The theorem has been cited by proponents of cosmological arguments as evidence that the universe had an absolute beginning, while other physicists caution that the theorem identifies a boundary of the classical spacetime description rather than an absolute origin, and that a complete theory of quantum gravity may describe what lies beyond that boundary.14, 19

Lawrence Krauss has argued that modern quantum cosmology shows how a universe can arise "from nothing," where "nothing" is understood as the quantum vacuum, a state with no particles but governed by the laws of quantum field theory.23 Philosophers and physicists alike have criticized this use of "nothing," noting that the quantum vacuum is a highly structured physical state governed by pre-existing physical laws and is therefore not "nothing" in the metaphysical sense that the cosmological question has traditionally intended.12 The disagreement illustrates a recurring pattern in philosophical cosmology: the same physical result can be interpreted as either resolving or deepening the question of why the universe exists.

Fine-tuning of physical constants

One of the most striking features of modern physics is the apparent fine-tuning of the fundamental constants and initial conditions of the universe for the existence of complex structures and, ultimately, life.

The term "fine-tuning" refers to the observation that if certain physical parameters were even slightly different from their actual values, the universe would be radically inhospitable to any form of organised complexity. This is an empirical claim about the sensitivity of physical outcomes to parameter values, and it is acknowledged by physicists across the philosophical spectrum, from those who see it as evidence for design to those who regard it as a selection effect requiring no further explanation.22

The cosmological constant, which drives the accelerating expansion of the universe, provides the most frequently cited example. Naive estimates from quantum field theory give a vacuum energy density roughly 120 orders of magnitude larger than the observed value. If the cosmological constant were even a few orders of magnitude greater than its measured value, the universe would have expanded too rapidly for galaxies, stars, or planets to form; if it were large and negative, the universe would have recollapsed almost immediately.7, 8 In 1987, Steven Weinberg used anthropic reasoning to predict that the cosmological constant, if nonzero, should be small enough to permit the formation of gravitationally bound structures but not dramatically smaller than this bound. The discovery of the accelerating expansion of the universe in 1998 confirmed a nonzero cosmological constant within the range Weinberg had predicted, a result that some regard as a significant success for anthropic reasoning.7
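The size of the mismatch can be checked with an order-of-magnitude estimate. The Python sketch below assumes that the naive theoretical value is set by the Planck density, c^5/(ħG^2), and that the observed dark-energy density is a fraction 0.685 of the critical density for a Hubble constant of 67.4 km/s/Mpc. The choice of cutoff, the cosmological inputs, and the function names are assumptions made for illustration; other cutoffs give different exponents.

```python
import math

# Physical constants in SI units.
C = 2.99792458e8          # speed of light, m/s
HBAR = 1.054571817e-34    # reduced Planck constant, J s
G = 6.67430e-11           # Newtonian gravitational constant, m^3 kg^-1 s^-2

# Assumed cosmological inputs (illustrative; not stated in the article).
H0_KM_S_MPC = 67.4        # Hubble constant in km/s/Mpc
OMEGA_LAMBDA = 0.685      # dark-energy fraction of the critical density
KM_PER_MPC = 3.0857e19    # kilometres in one megaparsec


def planck_density() -> float:
    """Mass density at the Planck scale, in kg/m^3 (the assumed cutoff)."""
    return C**5 / (HBAR * G**2)


def observed_dark_energy_density(h0_km_s_mpc: float, omega_lambda: float) -> float:
    """Observed dark-energy mass density in kg/m^3."""
    h0_per_second = h0_km_s_mpc / KM_PER_MPC
    critical_density = 3.0 * h0_per_second**2 / (8.0 * math.pi * G)
    return omega_lambda * critical_density


def discrepancy_orders_of_magnitude() -> float:
    """log10 of (Planck density / observed dark-energy density)."""
    observed = observed_dark_energy_density(H0_KM_S_MPC, OMEGA_LAMBDA)
    return math.log10(planck_density() / observed)


if __name__ == "__main__":
    print(f"Discrepancy: about 10^{discrepancy_orders_of_magnitude():.0f}")
```

With a Planck-scale cutoff the exponent lands in the low 120s, consistent with the "roughly 120 orders of magnitude" usually quoted. The estimate is deliberately crude, and it shows the scale of the puzzle rather than any particular resolution of it.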

Other examples of apparent fine-tuning include the strength of the strong nuclear force, which if altered by a few percent would prevent the formation of carbon or oxygen in stellar nucleosynthesis; the ratio of the electromagnetic force to gravity, which determines the stability of stars; the density parameter of the early universe, which had to be extraordinarily close to the critical value for the universe to avoid either immediate recollapse or excessively rapid expansion; and the low-entropy initial conditions of the Big Bang, which Roger Penrose has calculated would occur by chance with a probability of roughly one in 10^(10^123).11, 17, 22

Sensitivity of selected physical parameters to life-permitting values11, 22

The fact of fine-tuning is largely uncontested among physicists; what is contested is its significance. Three broad interpretive frameworks dominate the debate. The first holds that fine-tuning is evidence for a designer or purposive intelligence behind the laws of physics. The second invokes a multiverse of vast or infinite extent, in which all possible values of the constants are realised somewhere, and observers inevitably find themselves in life-permitting regions. The third maintains that fine-tuning is simply a brute fact about the universe that requires no further explanation, or that future physics will reveal deeper principles from which the values of the constants can be derived.9, 22

The anthropic principle and its variants

The anthropic principle, in its most general form, is the observation that the properties of the universe must be compatible with the existence of observers who measure those properties. The concept was given its modern formulation by the physicist Brandon Carter in a lecture delivered in 1973, and published in 1974, at a symposium honouring the 500th anniversary of the birth of Copernicus. Carter distinguished between a weak and a strong form of the principle.5

The weak anthropic principle (WAP) states that the observed values of physical and cosmological quantities are restricted by the requirement that carbon-based life can evolve and that the universe be old enough for this to have occurred. This is essentially a selection effect: we should not be surprised to observe conditions compatible with our existence, because we could not observe anything otherwise. Most physicists regard the WAP as close to a tautology that nonetheless has limited but genuine explanatory power, particularly when combined with a framework in which the relevant conditions vary across different regions or epochs.5, 6
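The logic of a selection effect can be illustrated with a toy simulation. The Python sketch below assumes, purely for illustration, that some parameter takes a uniformly random value between 0 and 1 in each of many regions, and that observers can arise only where the value does not exceed a hypothetical bound. The distribution, the bound, and the function name are all assumptions; none of them is derived from physics.

```python
import random


def sample_observed_values(n_regions: int, bound: float, seed: int = 0):
    """Draw one parameter value per region and keep those with observers.

    Returns a pair (all_values, observed_values). A region is treated as
    containing observers only if its value is at or below `bound`.
    """
    rng = random.Random(seed)  # seeded so the toy run is repeatable
    all_values = [rng.uniform(0.0, 1.0) for _ in range(n_regions)]
    observed_values = [value for value in all_values if value <= bound]
    return all_values, observed_values


if __name__ == "__main__":
    all_values, observed = sample_observed_values(n_regions=100_000, bound=0.01)
    print(f"Regions sampled:        {len(all_values)}")
    print(f"Regions with observers: {len(observed)}")
    if observed:
        print(f"Largest observed value: {max(observed):.4f}")
```

Every value that is ever "measured" in this toy model lies at or below the bound, however rare such regions are in the full ensemble. The sketch also makes the limitation visible: the explanation works only because an ensemble of regions with varying values has been assumed at the outset.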

The strong anthropic principle (SAP) makes the stronger claim that the universe must have those properties which allow life to develop within it at some stage in its history. In their 1986 treatise The Anthropic Cosmological Principle, Barrow and Tipler distinguish several interpretations of the SAP, some of which shade into metaphysics. One interpretation asserts that the constants of nature are constrained to take values that permit observers. Barrow and Tipler also formulate a still more speculative "final anthropic principle," which asserts that intelligent information-processing must come into existence in the universe, and once it does, it will never die out.6 This last formulation has been widely criticised as teleological speculation unsupported by physics.

The anthropic principle has been invoked as an explanatory tool in theoretical physics, most notably in Weinberg's 1987 prediction of the cosmological constant. If the cosmological constant varies across a multiverse ensemble, anthropic selection ensures that observers will only measure values small enough to permit structure formation, yielding an expected value close to the anthropic upper bound. The subsequent observational confirmation of a small but nonzero cosmological constant has been cited as evidence that anthropic reasoning can generate testable predictions.7 Critics respond that the explanatory power of such reasoning depends entirely on the assumed existence of a multiverse, which is itself unobserved, and that the prediction is consistent with but does not uniquely require anthropic selection.21

Cosmological arguments for a first cause

Cosmological arguments for the existence of God have a long philosophical pedigree, extending from Aristotle's Unmoved Mover through the medieval Islamic kalām and the Thomistic "Five Ways" to contemporary analytic philosophy of religion. These arguments share a common structure: they begin with some general feature of the world, such as motion, causation, or contingency, and argue that this feature ultimately requires a necessary, uncaused, or self-existent ground of explanation.1

The kalām cosmological argument, revived and defended in modern analytic philosophy primarily by William Lane Craig, takes the following form: (1) everything that begins to exist has a cause; (2) the universe began to exist; therefore (3) the universe has a cause. Craig and James Sinclair have marshalled both philosophical arguments against the possibility of an actually infinite past and scientific evidence, including the BGV theorem and the thermodynamic properties of the universe, in support of premise (2).14, 19 They argue that the cause of spacetime, matter, and energy must itself be non-physical, timeless, and enormously powerful, properties traditionally ascribed to God.
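The logical form of the three-step argument can be made explicit in a proof assistant. The Lean 4 sketch below treats "begins to exist" and "has a cause" as unanalysed predicates over an arbitrary type of entities; the names `Entity`, `BeginsToExist`, `HasCause`, and `universe` are hypothetical labels chosen for illustration.

```lean
-- A minimal formalisation of the syllogism's logical form.
-- All names here are illustrative assumptions, not an established library.
theorem kalam {Entity : Type} (BeginsToExist HasCause : Entity → Prop)
    (universe : Entity)
    -- Premise (1): everything that begins to exist has a cause.
    (p1 : ∀ x, BeginsToExist x → HasCause x)
    -- Premise (2): the universe began to exist.
    (p2 : BeginsToExist universe) :
    -- Conclusion (3): the universe has a cause.
    HasCause universe :=
  p1 universe p2
```

The proof is a single application of premise (1) to premise (2), which shows only that the argument is formally valid. Whether the premises are true, and whether the universe as a whole is the kind of thing to which premise (1) applies, is exactly what defenders and critics dispute.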

The Leibnizian cosmological argument, which proceeds from the principle of sufficient reason rather than from the finitude of the past, has also received extensive contemporary development. In Leibniz's formulation, the question is not merely why the universe began but why it exists at all: "Why is there something rather than nothing?" Even an eternal universe, on this view, would require an explanation for its existence unless it exists necessarily, and the physical universe does not appear to be a necessary being. Richard Swinburne has developed a Bayesian version of this argument, contending that the existence of a single, omnipotent, and omniscient God is a simpler and therefore more probable explanation of the existence and order of the universe than any alternative hypothesis.16

Critics have challenged cosmological arguments on multiple fronts. David Hume argued in the eighteenth century that the principle of causation cannot be extended beyond the realm of experience to the universe as a whole, and that positing a necessary being raises the question of what makes that being necessary.2 Adolf Grünbaum has argued that the Big Bang singularity does not constitute the kind of "coming into existence" that requires a causal explanation, since there is no prior state of nothingness from which the universe emerged; there is simply no time "before" the Big Bang.12 Others have questioned whether the concept of causation is coherent in the absence of a pre-existing temporal framework, or whether the argument commits a fallacy of composition in reasoning from the causal structure of events within the universe to the need for a cause of the universe itself.2, 12

The multiverse hypothesis

The multiverse hypothesis proposes that the observable universe is one member of an enormously large, perhaps infinite, ensemble of universes, each potentially governed by different physical constants, initial conditions, or even different laws of physics. The idea has roots in several independent areas of theoretical physics. Inflationary cosmology, in its "eternal inflation" variant, predicts that the exponential expansion of space produces an unbounded number of causally disconnected regions, each of which may have different low-energy physics. String theory, with its estimated 10^500 or more possible vacuum configurations, the so-called "string landscape," provides a theoretical mechanism by which the constants of nature could vary from one region to another.13, 15

Max Tegmark has proposed a four-level classification of multiverse hypotheses, ranging from regions of space beyond our cosmic horizon (Level I), through regions with different physical constants produced by eternal inflation (Level II), to the many branches of the quantum wave function (Level III), to the radical proposal that every self-consistent mathematical structure has physical existence (Level IV).15 Each level involves progressively more speculative extrapolation from established physics.

The multiverse is philosophically significant because, if it exists, it provides a naturalistic explanation for fine-tuning that does not invoke design. If all possible values of the constants are realised somewhere, then the anthropic principle reduces to a selection effect: we observe a life-permitting universe because we could not exist in any other kind. Leonard Susskind has argued that the combination of eternal inflation, the string landscape, and anthropic reasoning constitutes the most promising framework for understanding the observed values of the constants, and has explicitly framed this approach as an alternative to intelligent design.13

The multiverse hypothesis has attracted strong criticism from both scientific and philosophical quarters. The physicist George Ellis has argued that the existence of other universes is in principle unobservable and therefore lies outside the domain of empirical science, making multiverse proposals unfalsifiable in the Popperian sense.18 The philosopher John Leslie has observed that the multiverse and the design hypothesis are not necessarily exclusive alternatives: if a designer existed, such a being might have created a multiverse, and if a multiverse exists, the question of why the multiverse-generating mechanism has the specific properties it does remains unanswered.9 Victor Stenger, by contrast, has argued that fine-tuning itself is overstated, that many of the claimed sensitivities depend on varying only one parameter at a time while holding others fixed, and that computer simulations show a larger fraction of parameter space is life-permitting than proponents of fine-tuning suggest.21 Luke Barnes has responded with a detailed review of the physics literature, concluding that Stenger's objections are largely unsuccessful and that the evidence for fine-tuning remains robust across multiple independent parameters.22

Naturalistic responses and the limits of explanation

Naturalistic approaches to philosophical cosmology seek to account for the existence and properties of the universe without invoking supernatural agency. These approaches range from the modest claim that the universe and its laws are simply brute facts requiring no further explanation to the ambitious claim that physics can in principle explain why there is something rather than nothing.

The "brute fact" position holds that the chain of explanation must terminate somewhere, and that the fundamental laws and initial conditions of the universe may constitute the terminus. On this view, asking why the laws of physics are what they are is like asking why the axioms of mathematics are what they are: the question may be intelligible but may not have an answer of the kind being sought. Bertrand Russell articulated this position in a 1948 BBC debate with Frederick Copleston, declaring that "the universe is just there, and that's all."18

More ambitiously, some physicists have attempted to show that the universe could have arisen from "nothing" through quantum processes. Alexander Vilenkin has proposed a quantum tunnelling model in which the universe originates from a state of "literally nothing," defined as the absence of space, time, and matter. Krauss has argued similarly that the laws of quantum mechanics and gravity make the emergence of a universe not merely possible but inevitable.23 These proposals have been challenged by philosophers who argue that the quantum vacuum or the laws of quantum mechanics are not "nothing" in the relevant sense. As the philosopher David Albert noted in a widely cited review, if the fundamental laws of quantum mechanics exist, then there is not nothing but rather a very particular and structured something.23

Adolf Grünbaum has mounted a different kind of naturalistic critique, arguing that the traditional question "Why is there something rather than nothing?" rests on an unjustified assumption that nothingness is the natural or default state requiring no explanation, while existence is an anomaly demanding one. Grünbaum contends that there is no reason to privilege nothingness over existence in this way, and that the question therefore embodies an a priori ontology that is philosophically unwarranted.12 On this analysis, the demand for an explanation of the universe's existence is misguided from the outset, and neither theistic nor naturalistic cosmology can provide a satisfying answer because the question itself is defective.

Design, teleology, and the teleological argument

The teleological argument, or argument from design, infers the existence of a purposive intelligence from the order, complexity, or apparent purpose observed in the natural world. In its classical form, as stated by William Paley in 1802, the argument proceeds by analogy: just as a watch implies a watchmaker, the intricate mechanisms of nature imply a cosmic designer. David Hume had already subjected this kind of analogy to influential criticism in his Dialogues Concerning Natural Religion (1779), arguing that the analogy between natural objects and human artefacts is weak, that order might arise from the inherent properties of matter rather than from external design, and that even if a designer existed, nothing about the argument entails the specific attributes of the God of traditional theism.2

The modern fine-tuning argument represents a substantial reformulation of the teleological argument that avoids many of Hume's objections. Rather than arguing from biological complexity, which has been explained by natural selection since Darwin, the fine-tuning argument focuses on the fundamental constants and laws of physics, which set the stage for all subsequent physical and biological processes. Robin Collins has developed a probabilistic version of the argument: on the assumption that a designer exists, the probability of observing a life-permitting universe is reasonably high, since a designer would plausibly wish to create a universe containing life; on the assumption that no designer exists and the constants are set by chance or physical necessity, the probability of a life-permitting universe is extraordinarily low given the sensitivity of cosmic outcomes to parameter values.20
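The structure of this likelihood comparison can be written in the odds form of Bayes' theorem. In the following sketch, D stands for the hypothesis that a designer exists and L for the observation of a life-permitting universe; the symbols are introduced here for illustration and are not Collins's own notation.

```latex
\[
\underbrace{\frac{P(D \mid L)}{P(\neg D \mid L)}}_{\text{posterior odds}}
=
\underbrace{\frac{P(L \mid D)}{P(L \mid \neg D)}}_{\text{likelihood ratio}}
\times
\underbrace{\frac{P(D)}{P(\neg D)}}_{\text{prior odds}}
\]
```

The fine-tuning argument claims that the likelihood ratio is very large, because P(L | D) is taken to be reasonably high and P(L | ¬D) extraordinarily low. The expression also shows where the argument can be resisted: by disputing either likelihood, by questioning whether P(L | ¬D) is well defined at all, or by assigning very low prior odds.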

Richard Swinburne has embedded the fine-tuning argument within a broader cumulative case for theism, arguing that theism provides the simplest explanation for why the laws of physics have the specific form they do, why they are fine-tuned for life, and why there is a universe at all. On Swinburne's Bayesian analysis, the prior probability of theism is reasonable, the likelihood of the evidence given theism is high, and the cumulative force of cosmological, teleological, and other arguments renders theism more probable than not.16
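How such a Bayesian conclusion depends on its inputs can be seen in a small numerical sketch. The Python function below applies Bayes' theorem to a single hypothesis and its negation; the function name and every number in the usage example are hypothetical values chosen for illustration and are not Swinburne's own estimates.

```python
def posterior_probability(
    prior: float,
    likelihood_given_h: float,
    likelihood_given_not_h: float,
) -> float:
    """Return P(H | E) from P(H), P(E | H), and P(E | not H)."""
    for name, value in (
        ("prior", prior),
        ("likelihood_given_h", likelihood_given_h),
        ("likelihood_given_not_h", likelihood_given_not_h),
    ):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie between 0 and 1, got {value}")

    numerator = prior * likelihood_given_h
    evidence = numerator + (1.0 - prior) * likelihood_given_not_h
    if evidence == 0.0:
        raise ValueError("the evidence has zero probability under both hypotheses")
    return numerator / evidence


if __name__ == "__main__":
    # Hypothetical inputs: the same likelihoods combined with different priors.
    for prior in (0.5, 0.01, 1e-6):
        posterior = posterior_probability(prior, 0.9, 0.1)
        verdict = "more probable than not" if posterior > 0.5 else "not more probable than not"
        print(f"prior={prior:g}: posterior={posterior:.6f} ({verdict})")
```

With identical likelihoods, the verdict "more probable than not" holds for a moderate prior but fails for a sufficiently small one. This is why much of the disagreement over such arguments concerns the prior probability of theism and the assessment of simplicity rather than the physical evidence.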

Naturalistic critics respond on multiple levels. Some, like Stenger, dispute the empirical premise by arguing that the degree of fine-tuning has been exaggerated.21 Others accept fine-tuning as genuine but invoke the multiverse as a non-theistic explanation. Still others argue that the fine-tuning argument assigns probabilities to the constants of nature without a well-defined probability measure over possible universes, which on this view leaves the argument's key probabilities undefined. The absence of a principled way to define "the space of possible universes" remains one of the deepest unresolved problems in both physics and philosophy of cosmology.18, 22

The boundary between science and philosophy

A recurring theme in philosophical cosmology is the question of where empirical science ends and philosophical or theological interpretation begins. Cosmology occupies a unique position among the sciences: it studies a single, unrepeatable object (the universe as a whole), it cannot perform controlled experiments on its subject, and its most fundamental questions, such as why the laws of physics have the form they do, may lie beyond the reach of any possible observation. George Ellis has articulated this problem with particular clarity, identifying a hierarchy of nine issues in the philosophy of cosmology that range from the uniqueness of the universe and the limits of observational horizons to the nature of existence itself.18

The question of what constitutes a legitimate scientific explanation is central to many of these debates. The multiverse hypothesis, for example, is motivated by well-established theories (inflationary cosmology, string theory, quantum mechanics) but predicts the existence of entities that are, by construction, causally disconnected from our observable universe. Whether this renders multiverse proposals scientific, philosophical, or something else entirely is itself a contested philosophical question. Some physicists, including Ellis and Paul Davies, argue that unfalsifiable multiverse hypotheses should be classified as metaphysics. Others, including Tegmark and Susskind, contend that a theory can be scientific even if some of its predictions are unobservable, provided it is derived from a well-motivated theoretical framework and makes testable predictions at the observable level.15, 18

The same boundary question arises for theological interpretations of cosmological data. The Big Bang model describes the physical history of the universe with extraordinary precision, but it does not, by itself, answer the question of whether that history was initiated by a transcendent cause. The fine-tuning of the constants is an empirical fact, but whether it constitutes evidence for design depends on philosophical assumptions about the nature of explanation and the prior probability of theism. The BGV theorem establishes a past boundary for inflationary spacetimes, but whether this boundary is an absolute beginning of existence or merely the edge of our current physical description is a question that physics alone may not be able to resolve.14, 19

What is clear is that modern cosmology has not rendered philosophical and theological questions about the universe obsolete. If anything, the precision and scope of contemporary physical cosmology have sharpened those questions and made them more difficult to avoid. The universe is approximately 13.8 billion years old, spatially flat to high precision, governed by constants that appear fine-tuned for complexity, and expanding at an accelerating rate driven by a cosmological constant whose value is roughly 120 orders of magnitude smaller than naive theoretical predictions.8, 24 Whether these facts point toward a purposive intelligence, a vast multiverse, a brute unexplained given, or something else entirely remains one of the deepest open questions at the intersection of science and philosophy. The question "Why is there something rather than nothing?" has not been answered by modern cosmology. It has been made more precise.