Overview

- The fine-tuning problem refers to the observation that several fundamental physical constants and cosmological parameters appear to require values within extremely narrow ranges for the universe to permit the existence of complex structures such as atoms, stars, and life.

- Well-documented examples include the cosmological constant, which is roughly 10¹²⁰ times smaller than naive quantum field theory estimates; the strong nuclear force coupling constant, where a change of as little as 0.5 percent would cut stellar production of carbon or oxygen by factors of 30 to 1,000; and the proton-neutron mass difference, which must fall within a narrow window for stable hydrogen and stellar nucleosynthesis to occur.

- The major interpretive responses to fine-tuning include the multiverse hypothesis, which posits a vast ensemble of universes with varying constants; the design hypothesis, which infers purposeful calibration; observer selection effects, which note that only life-permitting values can be observed; and the possibility that a deeper physical theory will reveal the constants to be necessary rather than contingent.

In physics and cosmology, fine-tuning refers to the observation that certain fundamental physical constants and initial conditions of the universe appear to require values within remarkably narrow ranges for the cosmos to produce stable atoms, long-lived stars, heavy elements, and ultimately the chemical complexity necessary for life. Were the strength of gravity, the mass of the electron, or the energy of the vacuum even slightly different, the universe would be radically unlike the one observed — in many hypothetical variations, no complex structures would form at all. The phenomenon was first systematically catalogued in the late 1970s by Bernard Carr and Martin Rees, who showed that numerous apparently independent "coincidences" among dimensionless physical constants are prerequisites for the existence of astrophysical structures and observers.1 Since then, the fine-tuning problem has become one of the most intensely discussed topics at the intersection of fundamental physics, cosmology, and philosophy.

Fine-tuning is an empirical observation about the sensitivity of physical outcomes to the values of constants; it is not, by itself, an argument for any particular metaphysical conclusion. The fact that the universe's parameters appear calibrated within narrow life-permitting windows has prompted several major interpretive responses: the hypothesis that our universe is one member of an enormous multiverse in which constants vary; the inference that the constants were intentionally set by a designer; the suggestion that observer selection effects render the observation unsurprising; and the hope that a future theory of everything will reveal the constants to be uniquely determined by deeper principles. Each of these responses has both defenders and critics within the scientific and philosophical communities.9, 24

Definition and scope

A physical constant is said to be fine-tuned when the range of its values compatible with some outcome of interest — typically the existence of complex chemistry, stable stars, or observers — is very small compared to the range of values it might in principle take. The concept presupposes that the constants could, at least in principle, have been different, and that there exists some well-defined measure over the space of possible values against which the life-permitting range can be assessed as narrow.9 Fine-tuning claims are thus inherently counterfactual: they describe what would happen if a constant were changed while other physical laws remained fixed, and they require calculations or simulations of alternative universes to evaluate.

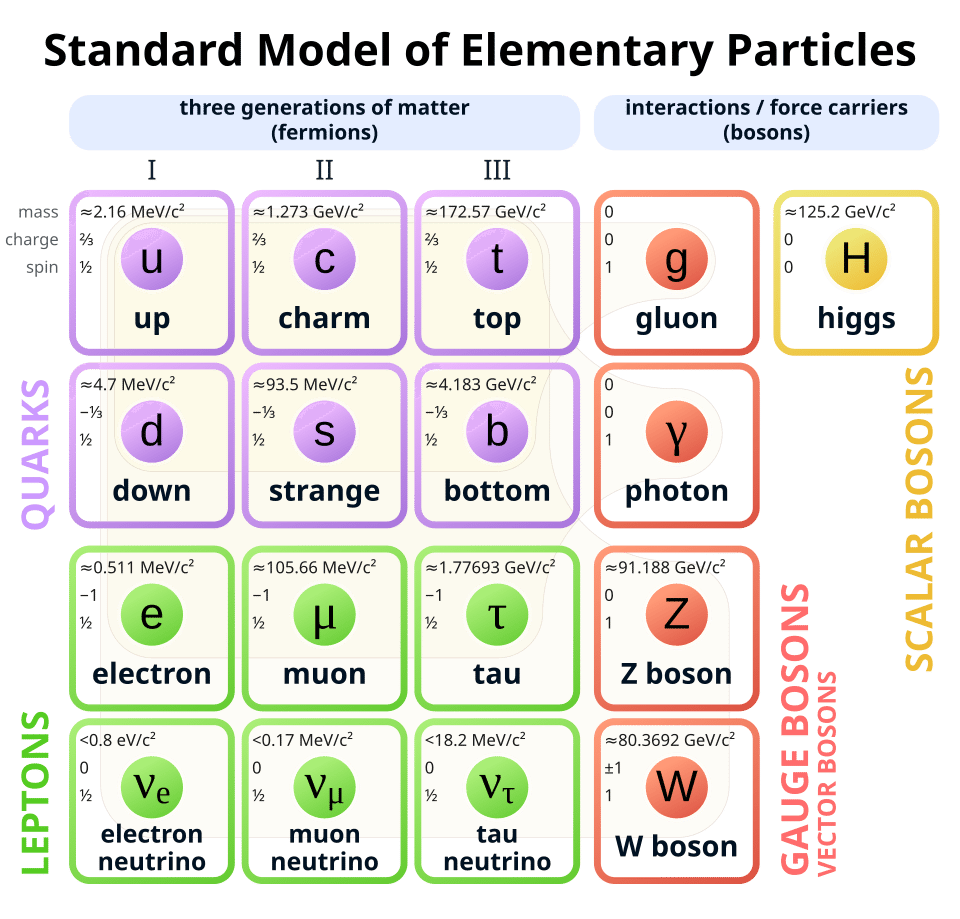

The constants most frequently discussed in the fine-tuning literature include the cosmological constant (the energy density of empty space), the strong nuclear force coupling constant, the electromagnetic fine-structure constant, the gravitational coupling constant, the masses of the elementary particles (particularly the quarks and the electron), and the initial density perturbation amplitude in the early universe.1, 8 Tegmark, Aguirre, Rees, and Wilczek identified 31 dimensionless physical constants required by the Standard Model of particle physics and cosmology, and noted that many of them appear to require values in narrow ranges for the universe to permit complex structures.8 However, not all constants are equally fine-tuned. Some, like the number of spatial dimensions, appear to be constrained by mathematical consistency rather than empirical sensitivity, while others allow considerable variation without catastrophic consequences for complexity.10

Fred Adams's comprehensive 2019 review quantified the degree of fine-tuning across astrophysical and nuclear physics constraints, concluding that while several constants are genuinely tuned within narrow windows (sometimes to one part in 10² or 10³), the overall degree of fine-tuning is less extreme than some popular accounts suggest, and that the sensitivity varies considerably depending on which parameters are varied simultaneously and what criterion for "life-permitting" is adopted.10

The cosmological constant

The most frequently cited and arguably most dramatic example of fine-tuning involves the cosmological constant, denoted Λ, which characterises the energy density of the vacuum and drives the accelerating expansion of the universe. Quantum field theory predicts that the vacuum should possess an enormous energy density arising from zero-point fluctuations of all quantum fields. The naive theoretical estimate of this vacuum energy exceeds the observed value by a factor of roughly 10¹²⁰, a discrepancy that Steven Weinberg called "the worst failure of an order-of-magnitude estimate in the history of science."2 If the cosmological constant had its theoretically expected value, the universe would have expanded so rapidly that no galaxies, stars, or planets could ever have formed.

The observed value of Λ is not precisely zero but is extremely small and positive, as demonstrated by the 1998 discovery that the expansion of the universe is accelerating.20 Measurements from the Planck satellite constrain the vacuum energy density to approximately 6.9 × 10⁻³⁰ grams per cubic centimetre, corresponding to a dark energy fraction Ω_Λ ≈ 0.69.22 In 1987, Weinberg argued on anthropic grounds that the cosmological constant could not be much larger than the value needed for gravitational collapse to form galaxies, placing an upper bound roughly two orders of magnitude above the matter density of the universe at the epoch of galaxy formation.3 The subsequently observed value fell within this anthropic window, a result that some physicists have cited as a successful anthropic prediction and others have regarded as merely a fortunate coincidence.3, 9
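The size of the mismatch can be reproduced with a back-of-the-envelope calculation. The sketch below assumes that the naive estimate cuts off zero-point modes at the Planck scale, so that the predicted density is of order the Planck density c⁵/(ħG²). That cutoff choice, the rounded constants, and the function names are illustrative assumptions rather than part of the cited analyses; a different cutoff changes the exponent.

```python
import math

# Rounded SI values of the constants (assumed here, not quoted in the text).
C = 2.998e8          # speed of light, m/s
HBAR = 1.0546e-34    # reduced Planck constant, J*s
G = 6.674e-11        # Newton's constant, m^3 kg^-1 s^-2


def planck_density_g_cm3() -> float:
    """Planck density c^5 / (hbar * G^2), converted from kg/m^3 to g/cm^3."""
    rho_si = C**5 / (HBAR * G**2)
    return rho_si * 1e-3  # 1 kg/m^3 equals 1e-3 g/cm^3


def discrepancy_orders(observed_g_cm3: float) -> float:
    """Orders of magnitude separating the Planck-cutoff estimate from observation."""
    return math.log10(planck_density_g_cm3() / observed_g_cm3)


observed = 6.9e-30   # vacuum energy density quoted in the text, g/cm^3
orders = discrepancy_orders(observed)
print(f"Planck-cutoff estimate exceeds observation by about 10^{orders:.0f}")
```

Under these assumptions the exponent lands slightly above 120, in the same range as the "roughly 10¹²⁰" figure; the exact number should not be over-read, since it depends on the cutoff.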

The cosmological constant problem is especially acute because no known symmetry or mechanism within the Standard Model of particle physics explains why the vacuum energy is so extraordinarily close to zero without being exactly zero. Supersymmetry, if it existed at the relevant energy scales, could in principle reduce the discrepancy, but even the most optimistic supersymmetric scenarios leave a residual fine-tuning of many orders of magnitude.2, 23

Nuclear and particle physics constants

Beyond the cosmological constant, several constants governing the strong and electromagnetic interactions display fine-tuning for the production of the chemical elements essential to known forms of complexity. The strong nuclear force, which binds quarks into protons and neutrons and binds nucleons into atomic nuclei, is a particularly well-studied case. In 2000, Oberhummer, Csótó, and Schlattl calculated that a change in the strength of the strong force by as little as 0.5 percent, or in the strength of the electromagnetic (Coulomb) force by approximately 4 percent, would reduce the stellar production of either carbon or oxygen by factors of 30 to 1,000, potentially rendering the universe devoid of the raw materials for organic chemistry.7

The sensitivity of carbon production to the strong force arises from the triple-alpha process, the nuclear reaction sequence by which helium is converted to carbon inside red giant stars. This process proceeds through a resonant excited state of the carbon-12 nucleus at 7.65 MeV above the ground state, known as the Hoyle state after the astrophysicist Fred Hoyle, who predicted its existence in 1953 on the grounds that the observed abundance of carbon in the universe would be inexplicable without such a resonance. The Hoyle state must lie within a narrow energy window above the combined mass of three helium-4 nuclei for the triple-alpha reaction rate to be sufficient; if it were significantly higher or lower, stellar nucleosynthesis would produce negligible carbon.7, 13 Epelbaum and collaborators used nuclear lattice effective field theory to quantify the sensitivity of the Hoyle state energy to the fundamental parameters of nuclear physics, finding that the life-essential proximity of the Hoyle state to the triple-alpha threshold is robust to small variations in quark masses but would fail outside a window of roughly 2 to 3 percent variation in the light quark mass.13
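How close the Hoyle state sits to the three-alpha threshold can be checked from atomic masses. The following is a minimal sketch: the helium-4 mass and the conversion factor are standard rounded values supplied here as assumptions, and the function names are hypothetical. Atomic masses are used throughout, so the six electron masses cancel between three helium atoms and one carbon atom.

```python
U_TO_MEV = 931.494        # energy equivalent of one atomic mass unit, MeV (rounded)
M_HE4_U = 4.002603        # atomic mass of helium-4, u (rounded)
M_C12_U = 12.0            # atomic mass of carbon-12, u (exact by definition of u)
HOYLE_STATE_MEV = 7.65    # excitation energy quoted in the text


def triple_alpha_threshold_mev() -> float:
    """Energy of three free helium-4 nuclei measured from the carbon-12 ground state."""
    return (3 * M_HE4_U - M_C12_U) * U_TO_MEV


def hoyle_offset_mev() -> float:
    """Height of the Hoyle state above the three-alpha threshold."""
    return HOYLE_STATE_MEV - triple_alpha_threshold_mev()


print(f"threshold: {triple_alpha_threshold_mev():.2f} MeV")
print(f"Hoyle state above threshold: {hoyle_offset_mev():.2f} MeV")
```

The offset comes out at a few tenths of an MeV, small compared with the 7.65 MeV excitation energy, which is the sense in which the resonance lies in a narrow window.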

The proton-neutron mass difference provides another well-documented example. The neutron is approximately 1.29 MeV heavier than the proton, a small difference arising from the mass difference between the down and up quarks and from electromagnetic self-energy contributions. If this mass difference were smaller than the electron mass (0.511 MeV), electrons would be captured by protons to form neutrons, and the universe would contain no hydrogen atoms, no stable nuclei beyond neutrons, and no water. If the mass difference were substantially larger, neutrons would decay so rapidly that negligible quantities of heavier elements could form during Big Bang nucleosynthesis, and stellar burning would be radically altered.1, 6 The observed value appears to lie within a narrow range that simultaneously permits stable hydrogen, stable heavy nuclei, and the nuclear reactions that power stars.
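The lower bound described above is a simple energy-balance condition, sketched below using only the two numbers quoted in the paragraph. The function names are hypothetical, neutrino mass and atomic binding energy are neglected, and the upper bound is not encoded because the text does not give a figure for it.

```python
NEUTRON_PROTON_DIFF_MEV = 1.29   # m_n - m_p, as quoted in the text
ELECTRON_MASS_MEV = 0.511        # as quoted in the text


def hydrogen_is_stable(delta_m_mev: float, m_e_mev: float = ELECTRON_MASS_MEV) -> bool:
    """Electron capture p + e- -> n + nu is energetically forbidden only if m_n - m_p > m_e."""
    return delta_m_mev > m_e_mev


def neutron_decay_energy_mev(delta_m_mev: float, m_e_mev: float = ELECTRON_MASS_MEV) -> float:
    """Energy released in n -> p + e- + anti-nu; a negative value means the decay is forbidden."""
    return delta_m_mev - m_e_mev


print(hydrogen_is_stable(NEUTRON_PROTON_DIFF_MEV))   # observed universe
print(hydrogen_is_stable(0.4))                       # hypothetical smaller mass difference
print(neutron_decay_energy_mev(NEUTRON_PROTON_DIFF_MEV))
```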

Craig Hogan's review identified five parameters of the Standard Model upon which the basic structures of the world — nucleons, nuclei, atoms, molecules, planets, and stars — principally depend, noting that three of these appear to be unconstrained by any known symmetry and yet occupy a narrow window compatible with complex chemistry.6

Sensitivity of selected physical constants to life-permitting variation7, 9, 10

The chart illustrates the approximate permitted fractional variation for selected constants before carbon production, stellar stability, or nuclear physics would fail catastrophically. The strong force coupling is the most tightly constrained, while the gravitational coupling constant permits wider variation (though still within bounds set by stellar lifetime and planetary stability requirements).7, 10

Cosmological initial conditions

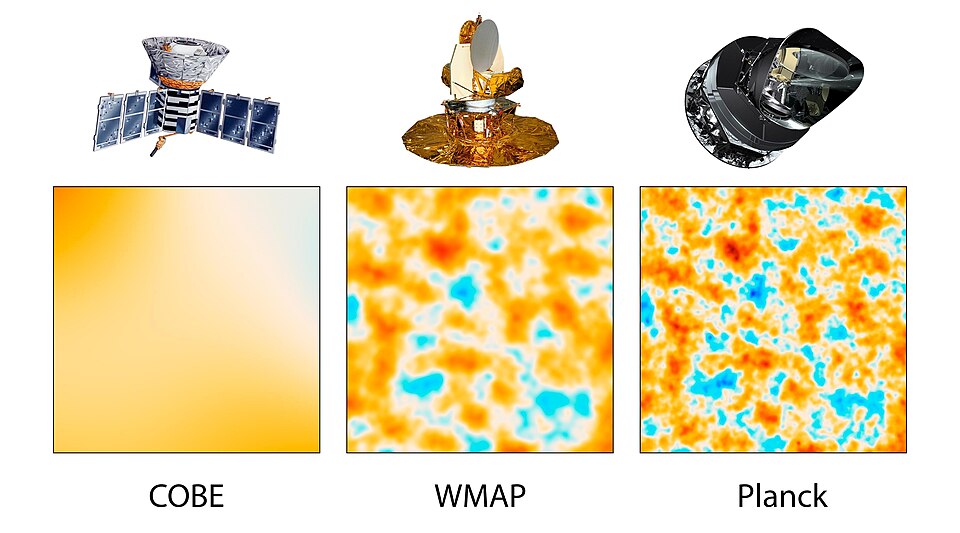

Fine-tuning extends beyond the values of coupling constants to the initial conditions of the universe itself. The amplitude of primordial density perturbations, conventionally denoted Q, is measured to be approximately 2 × 10⁻⁵ from observations of the cosmic microwave background.22

If Q were substantially smaller, the density fluctuations seeded during cosmic inflation would have been too weak for gravitational collapse to produce galaxies and stars within the age of the universe. If Q were much larger, the universe would have collapsed into dense structures too quickly, producing an overabundance of black holes and an environment hostile to the formation of long-lived planetary systems.1, 16

Martin Rees, in his book Just Six Numbers, identified six dimensionless constants whose values determine the essential features of the physical universe: the ratio of the electromagnetic to gravitational force strength (N ≈ 10³⁶), the fraction of mass converted to energy in nuclear fusion (ε ≈ 0.007), the cosmic density parameter (Ω), the cosmological constant (Λ), the amplitude of density perturbations (Q ≈ 10⁻⁵), and the number of spatial dimensions (D = 3).16 Rees argued that if any of these numbers were very different, the universe would be incapable of generating the conditions necessary for complexity and life.
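Two of these numbers can be recovered from laboratory constants. The sketch below assumes that N is taken as the electric-to-gravitational force ratio between two protons and that ε is the mass fraction released when four protons end up as one helium-4 nucleus; the rounded constants and function names are assumptions added for illustration, and positron masses are ignored.

```python
COULOMB_K = 8.988e9        # 1 / (4 * pi * epsilon_0), N m^2 C^-2
E_CHARGE = 1.602e-19       # elementary charge, C
G = 6.674e-11              # Newton's constant, m^3 kg^-1 s^-2
M_PROTON_KG = 1.6726e-27   # proton mass, kg
M_PROTON_U = 1.007276      # proton mass, u
M_ALPHA_U = 4.001506       # helium-4 nucleus mass, u


def force_ratio_n() -> float:
    """Electric over gravitational force between two protons (the separation cancels)."""
    return COULOMB_K * E_CHARGE**2 / (G * M_PROTON_KG**2)


def fusion_efficiency() -> float:
    """Fraction of rest mass released when four protons fuse into one helium-4 nucleus."""
    mass_in = 4 * M_PROTON_U
    return (mass_in - M_ALPHA_U) / mass_in


print(f"N ~ {force_ratio_n():.2e}")
print(f"epsilon ~ {fusion_efficiency():.4f}")
```

Both results agree with the orders of magnitude Rees quotes, N ≈ 10³⁶ and ε ≈ 0.007.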

The spatial flatness of the universe (the observation that the total energy density is extremely close to the critical density, with Ω_total measured by Planck to be 1.0007 ± 0.0019) was historically considered a fine-tuning problem in its own right.22 In the standard Big Bang model without inflation, any deviation of Ω from unity at early times would have grown rapidly, requiring the initial density to be tuned to one part in 10⁶⁰ or more for the universe to remain flat for 13.8 billion years. Cosmic inflation resolves this particular fine-tuning by dynamically driving the universe toward flatness during a period of exponential expansion in the first fraction of a second, regardless of the initial conditions.15 However, inflation itself requires specific initial conditions for the inflaton field, and whether these constitute a new fine-tuning problem remains debated.10, 18
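The growth of a small curvature deviation follows directly from the Friedmann equation. The sketch below uses standard textbook notation that does not appear in the original text (scale factor a, Hubble rate H, curvature constant k, density ρ) and neglects the cosmological constant, which is unimportant at early times.

```latex
\begin{align}
  H^2 = \frac{8\pi G}{3}\,\rho - \frac{k c^2}{a^2}
  \quad &\Longrightarrow \quad
  \Omega - 1 = \frac{k c^2}{a^2 H^2},
  \qquad \Omega \equiv \frac{8\pi G \rho}{3 H^2}, \\
  \text{radiation era } (a \propto t^{1/2}) : \quad
  &|\Omega - 1| \propto t, \\
  \text{matter era } (a \propto t^{2/3}) : \quad
  &|\Omega - 1| \propto t^{2/3}, \\
  \text{inflation } (a \propto e^{Ht},\ H \approx \text{const}) : \quad
  &|\Omega - 1| \propto e^{-2Ht}.
\end{align}
```

Because the deviation grows as a power of time in both the radiation and matter eras, a value near zero today implies a far smaller value at very early times, which is the tuning described above. During inflation the same relation runs the other way and the deviation shrinks exponentially.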

The anthropic principle

The anthropic principle, first formally articulated by Brandon Carter in 1974, provides a framework for interpreting the apparent fine-tuning of the constants. Carter distinguished between two formulations.4

The weak anthropic principle states that the observed values of the physical constants must be compatible with the existence of observers, since we could not be present to measure the constants in a universe where observers cannot exist. This formulation is essentially a statement of selection bias: any universe that is observed necessarily satisfies the conditions for the existence of at least one observer. The weak anthropic principle is logically trivial and scientifically uncontroversial, though its explanatory power is debated.4, 5

The strong anthropic principle, as stated by Carter, asserts that the universe "must be such as to admit the creation of observers within it at some stage."4 This formulation is more contentious, as it appears to imply that the universe is in some sense compelled to produce observers — a claim that goes beyond observational selection and ventures into metaphysical territory. Barrow and Tipler, in their comprehensive 1986 monograph The Anthropic Cosmological Principle, catalogued the physical and cosmological coincidences that appear necessary for the existence of observers and explored several versions of the strong anthropic principle, including a "final anthropic principle" that posits the universe must produce intelligent information processing.5 The strong anthropic principle in its various formulations has been criticised as teleological, unfalsifiable, or vacuous, though its defenders argue that it expresses a genuine feature of the relationship between physical law and the existence of observers.5, 9

It is important to distinguish the anthropic principle as a selection effect from the broader interpretive claims that are sometimes attached to it. The weak anthropic principle, by itself, does not explain why the constants have life-permitting values; it merely notes that the observation of such values is unsurprising given that we exist to make the observation. Whether this selection effect constitutes a satisfying explanation depends on additional assumptions about the ensemble of possibilities from which our universe is drawn.9, 17

The multiverse hypothesis

The most widely discussed scientific response to the fine-tuning problem is the multiverse hypothesis, which posits that the observable universe is only one of an enormous — perhaps infinite — ensemble of universes, each with different values of the physical constants. If such an ensemble exists and if the constants vary across its members, then it is statistically unsurprising that at least one universe possesses life-permitting values, and the weak anthropic principle explains why we observe ourselves in such a universe rather than in one of the vastly more numerous sterile members of the ensemble.11, 17
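The statistical claim can be made concrete with a toy calculation. The sketch below assumes that each universe independently has the same probability p of being life-permitting, which is a strong simplification, and works with base-10 logarithms because the numbers involved overflow ordinary floats. The function name and the example values of p and N are hypothetical.

```python
import math


def prob_at_least_one(log10_p: float, log10_n: float) -> float:
    """Approximate 1 - (1 - p)^N by 1 - exp(-N * p), which is accurate for p << 1."""
    log10_expected = log10_n + log10_p        # log10 of the expected count N * p
    if log10_expected > 3:                    # exp(-N * p) is indistinguishable from 0
        return 1.0
    # expm1 keeps precision when N * p is tiny and the answer is close to 0.
    return -math.expm1(-(10.0 ** log10_expected))


# Illustrative inputs only: p = 1e-120 per universe.
print(prob_at_least_one(-120, 500))   # ensemble of 1e500 members
print(prob_at_least_one(-120, 100))   # ensemble of 1e100 members
```

The outcome is controlled almost entirely by whether N·p is large or small, which is why the size of the ensemble and the measure used to define p both matter to the argument.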

Two developments in theoretical physics have given the multiverse hypothesis a concrete physical basis. The first is eternal inflation, the idea that the inflationary expansion of space, once initiated, generically continues in some regions of the universe even after it ends locally. In the eternal inflation framework, different regions of space undergo inflation at different times and may settle into different vacuum states when inflation ends, producing a vast collection of causally disconnected "bubble universes" or "pocket universes," each potentially characterised by different effective physical constants.15, 21

The second development is the string theory landscape. In 2000, Bousso and Polchinski showed that the compactification of extra spatial dimensions in string theory, combined with the quantization of gauge fluxes threading these dimensions, generates an enormous number of metastable vacuum states: an estimated 10⁵⁰⁰ or more distinct configurations, each corresponding to a different set of effective low-energy physics, including different values of the cosmological constant.12 Susskind coined the term "landscape" for this vast space of possible string vacua and argued that the combination of the landscape with eternal inflation provides a mechanism for populating all possible vacua across the multiverse, transforming the cosmological constant problem from a question of fundamental dynamics into an environmental selection effect.11

Critics of the multiverse hypothesis raise several objections. The other members of the multiverse, if they exist, are causally disconnected from our universe and thus cannot be directly observed or falsified by experiment, leading some physicists and philosophers to question whether the multiverse is a genuinely scientific hypothesis or a metaphysical speculation dressed in the language of physics.14 Furthermore, making probabilistic predictions within the multiverse requires a measure — a way of assigning relative weights or probabilities to different vacua — and no consensus exists on the correct measure, a difficulty known as the measure problem. Different measures yield different predictions, and some yield absurd or paradoxical results, casting doubt on the predictive power of the landscape approach.11, 14

The design argument

The apparent fine-tuning of the universe has also been invoked as evidence for purposeful design — the idea that the physical constants were intentionally calibrated to permit the emergence of life and complexity. This is a modern form of the teleological argument, one of the oldest lines of reasoning in natural theology. Proponents argue that the extreme improbability of the observed combination of constants, on the assumption of chance, constitutes evidence for a designing intelligence, just as the intricate structure of a mechanical watch implies a watchmaker.5, 17

The philosopher John Leslie articulated a widely discussed version of this argument using the analogy of a firing squad. If fifty trained marksmen all fire at a prisoner and every one of them misses, the prisoner should not dismiss the outcome with the remark that, had they not all missed, he would not be alive to wonder about it. The improbability of the outcome demands an explanation even after conditioning on the prisoner's survival — either the marksmen deliberately missed, or there were many firing squads and the prisoner happened to be in front of the only one that missed.17 By analogy, the fine-tuning of the constants demands an explanation beyond the bare observation that we exist, and Leslie argued that the two serious candidates are design and a multiverse, without advocating decisively for either one.
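The logic of the analogy can be expressed as a likelihood comparison. The sketch below is a toy model with made-up numbers: the per-marksman miss probabilities under the "accident" and "deliberate" hypotheses are hypothetical, and the shots are assumed independent. It shows only that survival, though guaranteed to be what the prisoner observes, is far more probable under one hypothesis than the other.

```python
import math


def log10_prob_all_miss(p_miss: float, n_marksmen: int = 50) -> float:
    """log10 of the probability that every marksman misses, assuming independent shots."""
    return n_marksmen * math.log10(p_miss)


# Hypothetical inputs: a trained marksman misses by accident 1% of the time,
# and misses 99% of the time when instructed to miss.
log10_accident = log10_prob_all_miss(0.01)
log10_deliberate = log10_prob_all_miss(0.99)

# log10 of the likelihood ratio favouring "deliberate" over "accident".
log10_ratio = log10_deliberate - log10_accident
print(f"accident: 10^{log10_accident:.0f}, ratio: 10^{log10_ratio:.1f}")
```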

The design hypothesis faces its own philosophical challenges. It does not specify the nature, motives, or mechanisms of the hypothesised designer, leaving it without predictive content beyond the assertion that the constants should be life-permitting — which is already known to be the case. Critics also raise the question of whether the designer would itself require fine-tuning or explanation, generating an infinite regress.9 Proponents respond that the design inference is an inference to the best explanation rather than a deductive proof, and that not all explanations need themselves be explained for the original explanatory inference to be rational.17, 24 The design argument from fine-tuning remains a topic of active discussion in the philosophy of religion and in philosophical cosmology, distinct from and more narrowly focused than the broader intelligent design movement in biology.

Scientific critiques and methodological debates

Several lines of critique challenge the cogency of fine-tuning claims on scientific and methodological grounds. One prominent objection concerns observer selection bias: since we can only observe a universe in which observers exist, we should not be surprised to find the constants life-permitting, just as a fish should not be surprised to find itself in water. This objection is essentially an application of the weak anthropic principle, and its force depends on whether fine-tuning is merely about what we observe or about the objective probability of the observed values in the space of possibilities.4, 9

A related challenge arises from the measure problem in parameter space. Fine-tuning claims assert that the life-permitting range of a constant is small compared to the full range it could take, but the latter quantity is often ill-defined. What counts as the "full range" of the strong force coupling constant or the cosmological constant? Without a well-motivated measure or probability distribution over the space of possible values, the claim that the life-permitting range is "narrow" lacks a rigorous foundation. Different choices of measure can make the same range appear either negligibly small or entirely typical.9, 10
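The dependence on the measure is easy to demonstrate. In the sketch below, the life-permitting window and the full range are hypothetical numbers chosen for illustration, and the two functions compare a measure uniform in the parameter with one uniform in its logarithm.

```python
import math


def fraction_uniform(lo: float, hi: float, full_lo: float, full_hi: float) -> float:
    """Share of the full range occupied by [lo, hi] under a measure uniform in the parameter."""
    return (hi - lo) / (full_hi - full_lo)


def fraction_log_uniform(lo: float, hi: float, full_lo: float, full_hi: float) -> float:
    """Share occupied by [lo, hi] under a measure uniform in the logarithm of the parameter."""
    return math.log(hi / lo) / math.log(full_hi / full_lo)


# Hypothetical example: the window spans 1e-3 to 1e-1, the full range 1e-6 to 1e6.
window = (1e-3, 1e-1)
full = (1e-6, 1e6)

uniform_share = fraction_uniform(*window, *full)
log_share = fraction_log_uniform(*window, *full)
print(f"uniform measure: {uniform_share:.1e}")
print(f"log-uniform measure: {log_share:.3f}")
```

The same window occupies about one part in ten million of the range under the first measure and about one sixth under the second, so a verdict of "narrow" is only as well founded as the choice of measure.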

A further critique challenges the assumption that physical constants can be varied independently. In actual physical theories, the constants are often linked by renormalization group equations, symmetry relations, or other theoretical constraints. Varying one constant while holding all others fixed may correspond to no physically realizable scenario, making the counterfactual analysis of fine-tuning misleading.14, 23 Donoghue has argued that the fine-tuning problems of particle physics — including the cosmological constant and the Higgs mass — may be artefacts of our current theoretical framework rather than genuine features of nature, and that they may dissolve once a more complete theory is found.23

Adams's 2019 review found that the degree of fine-tuning varies considerably across different physical parameters and depends sensitively on what counts as "life-permitting." When multiple constants are varied simultaneously, the fraction of parameter space compatible with the existence of stars and complex chemistry is larger than one might infer from varying each constant in isolation, because alternative stellar configurations and nucleosynthetic pathways can partially compensate for changes in individual parameters.10 For example, universes with weaker gravity can still produce functional stars if the nuclear reaction rates are suitably adjusted, and universes without stable deuterium can still produce heavy elements through alternative nuclear pathways.10, 19
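The effect of compensation between parameters can be illustrated with a toy model. The viability criterion below is entirely hypothetical and is not taken from Adams's review: two rescaled parameters x and y, each ranging over [-1, 1] with reference value 0, are deemed viable whenever a shift in one is matched by a similar shift in the other. The sketch compares the true viable fraction of the plane with the estimate obtained by multiplying one-at-a-time windows.

```python
def viable(x: float, y: float, width: float = 0.05) -> bool:
    """Hypothetical criterion: a change in x can be offset by a matching change in y."""
    return abs(x - y) < width


def grid_points(n: int) -> list[float]:
    """Midpoints of n equal cells covering [-1, 1]."""
    return [-1 + (2 * i + 1) / n for i in range(n)]


def one_at_a_time_fraction(n: int = 400) -> float:
    """Fraction of x values allowed while y is held at its reference value 0."""
    return sum(viable(x, 0.0) for x in grid_points(n)) / n


def joint_fraction(n: int = 400) -> float:
    """Fraction of the (x, y) grid allowed when both parameters vary together."""
    pts = grid_points(n)
    return sum(viable(x, y) for x in pts for y in pts) / (n * n)


single = one_at_a_time_fraction()
naive_joint = single**2          # treats the two windows as independent
actual_joint = joint_fraction()
print(f"single: {single:.3f}, naive joint: {naive_joint:.4f}, actual joint: {actual_joint:.3f}")
```

In this toy case the naive product is about twenty times smaller than the actual viable share of the plane, because the viable region is a diagonal band rather than a small box around the reference point.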

Necessity arguments and the search for a deeper theory

A fourth interpretive response to fine-tuning holds that the constants are not contingent but necessary — that a complete theory of fundamental physics will ultimately derive the values of all physical constants from first principles, leaving no free parameters to be fine-tuned. On this view, the apparent fine-tuning is an artefact of our incomplete understanding rather than a genuine feature of nature. Just as the orbit of the Earth does not require fine-tuning because it is determined by the laws of gravitational dynamics and the initial conditions of the solar system, the constants of particle physics may be determined by a deeper theory that we have not yet discovered.14, 24

This hope has a long history in theoretical physics. Einstein famously sought a unified field theory that would uniquely determine all constants of nature, and the modern quest for a theory of everything — whether through string theory, loop quantum gravity, or some as-yet-unknown framework — is motivated in part by the desire to explain why the constants take the values they do. However, the development of the string theory landscape has undercut this programme within the context of string theory itself, because the landscape appears to contain an enormous number of solutions with different effective constants, suggesting that string theory does not uniquely determine a single set of low-energy physics.11, 14

Some physicists have proposed that the constants may be determined by a principle of mathematical consistency or self-selection. For example, if certain combinations of constants lead to mathematical inconsistencies or physical instabilities, these could be excluded on purely theoretical grounds, potentially narrowing the space of allowed values. However, no such principle has yet been identified that would uniquely select the observed values, and the extent to which mathematical consistency constrains the constants remains an open question.8, 14

The relationship between necessity arguments and the multiverse hypothesis is noteworthy. If the constants are genuinely necessary — uniquely determined by the fundamental theory — then the fine-tuning problem dissolves, but so does the motivation for a multiverse of varying constants. Conversely, if the constants are environmental variables that differ across the landscape, necessity arguments are inapplicable, and the anthropic or design explanations come to the fore. The two approaches are thus in tension, and the resolution depends on the ultimate structure of fundamental physics, which remains unknown.14, 24

Current status and ongoing research

The fine-tuning of physical constants remains an active area of research in both physics and philosophy. On the empirical side, increasingly precise measurements of cosmological parameters by surveys such as Planck have sharpened the constraints on the cosmological constant, the density perturbation amplitude, and the spatial curvature, confirming that these quantities lie within narrow windows compatible with the formation of large-scale structure.22 On the theoretical side, advances in nuclear lattice effective field theory have enabled more rigorous calculations of how sensitive stellar nucleosynthesis is to variations in the fundamental constants, yielding quantitative bounds on the life-permitting ranges of quark masses and force strengths.13

The philosophical literature has grown substantially, with detailed analyses of the logical structure of fine-tuning arguments, the conditions under which observer selection effects are or are not adequate explanations, and the epistemological status of multiverse theories. Livio and Rees have emphasised that the fine-tuning discussion must carefully distinguish between parameters that are genuinely fine-tuned (in the sense of having narrow life-permitting ranges supported by detailed calculations) and those that are merely asserted to be fine-tuned on the basis of rough or incomplete analysis.19 Schellekens has reviewed the status of anthropic reasoning at the interface of particle physics and string theory, concluding that while the landscape provides a framework for understanding environmental selection, it remains far from a predictive theory.14

At present, no consensus exists among physicists or philosophers on the correct interpretation of fine-tuning. The multiverse hypothesis, the design hypothesis, the necessity hypothesis, and the observer-selection-effect response each have articulate defenders and unresolved difficulties. What is broadly accepted is the empirical observation itself: the laws of physics as currently understood contain free parameters, and the values of several of those parameters must lie within narrow ranges for the universe to produce the complex structures that are observed. Whether this observation points toward a vast ensemble of universes, a purposeful creator, an as-yet-unknown fundamental theory, or simply a brute fact about reality remains one of the deepest open questions in science and philosophy.9, 10, 24

References

Quantization of four-form fluxes and dynamical neutralization of the cosmological constant

Observational evidence from supernovae for an accelerating universe and a cosmological constant